Is AI driving digital transformation? Or is digital transformation driving the adoption of AI? Are they synergistic, or just getting in the way of each other?

Numerous studies show that large organizations are realizing only limited success in digital transformation projects. For example, research from BCG and McKinsey has put success rates in the 30% range.[1][2] The pandemic has accelerated some aspects of digital transformations, since the ability to work remotely required new tools, technology, and infrastructure. However, it is still unclear whether that acceleration is leading to the success of transformation efforts beyond the ability to work remotely.

In many cases, acceleration has simply meant getting a program initiated and deployed quickly, but at the cost of increased technical debt and less attention to foundational processes and data quality. As one large global professional services firm tech leader explained to me, “We’ve done two years of transformation in a matter of weeks.” That might sound good, but in reality, it is not possible to do so without cutting some corners.

The stats for the success of AI programs are not great either. According to 2021 research by IBM cited in a recent Sloan Management Review article on AI[3], just over 20% of surveyed organizations have deployed AI throughout the enterprise. Other research cited in the article indicates that 40% of organizations that have made significant investments in AI have not realized business benefits. In other words, 60% of organizations that have significant investments in AI have realized business benefits. Some pundits claim that AI accelerates and improves the success of digital transformations.[4] However, a 2018 McKinsey study reported that only 23% of organizations with successful digital transformations are leveraging AI technology.[5] That means most successful transformations appear to have succeeded without explicitly leveraging AI technology.

The Problem with Surveys

I recently read a white paper about AI successes in B2B transformations. One organization was cited as having achieved tremendous value from AI in the personalization of B2B communications. The company is a past client of my firm, and I have been in communication with people who are responsible for B2B personalization in the company. However, according to one of my contacts, the organization is in fact still struggling with personalization. This is an example of the “front line paradox” where “Front line employees are often the first to sense impending change but the last to be heard within an organization”[6]. They are also the ones who have to deal directly with the challenges and see the impact of newly deployed technologies and work processes. To paraphrase Stanford professor Robert Burgelman and Andy Grove, the late CEO of Intel, front-line employees can feel the winds of change because they spend time outdoors where the stormy clouds of disruption rage[7].

The message is that the information that senior leadership is communicating to the press or derives from surveys and interviews may not necessarily line up with the experience of those who interact with customers or use new solutions to serve customers. In this instance, the reality on the ground was very different from what was communicated from the top of the hierarchy.

One Common Theme

An article in Towards Data Science published in November 2020[8] asserts that “Digital Transformation is one of the most critical drivers on how companies will continue to deliver value to their customers in a highly competitive and ever-changing business environment.” It goes on to say, “Artificial Intelligence (AI) has been recognized as one of the central enablers of digital transformation in several industries.”

Considering the low success rates of digital transformations and the obstacles to AI operationalization, it is unclear how one high-risk, low-success-rate type of program can be a critical enabler of another high-risk, low-success-rate type of program. It’s similar to the strategy of combining two money-losing ventures to create one profitable one. Could it work? Sure. Is it likely to? No.

The author of the article went on to articulate the importance of organizing and structuring data to support broad transformations and specific AI initiatives. “There is no point in seriously talking about Artificial Intelligence if you do not have your data organized.”[9] The same of course can be said about overall digital transformations. A digital transformation is a data transformation. Organizations need to slow down and address fundamental issues around data before they can accelerate AI programs or be successful with larger transformation initiatives.

Broad Scope with Narrow Interventions

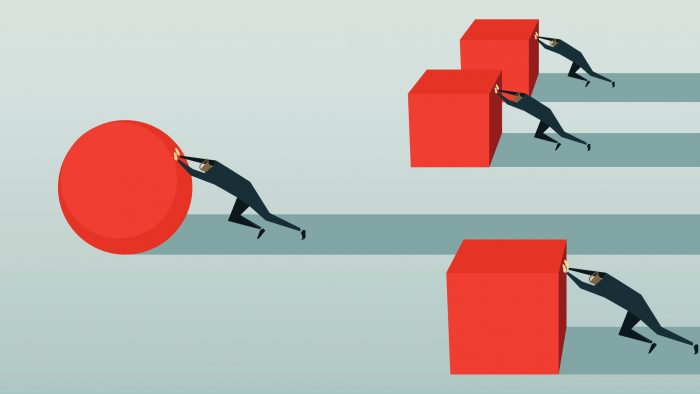

One interesting dichotomy is that digital transformation is typically broad in scope, cutting across multiple departments and processes, but successful AI projects are narrow in scope, addressing specific processes and incremental improvements. Digital transformation entails a holistic view of value chains, while applying AI to departments and individual processes can lead to fragmented efforts disconnected from larger enterprise information flows. Getting the most value from distributed experiments with AI requires the ability to centralize lessons learned and standardize on approaches that have realized value.

Balancing these two perspectives requires that leaders take a holistic view of the overall transformation while zooming in to the details of the dozens or hundreds of individual processes that support business objectives and outcomes. Going from a macro view down to the micro and back again is part of a successful transformation of any type. Bringing in additional technologies as part of the transformation where the organization may lack maturity adds complexity and increases the number of dependencies on foundational processes, data architecture, and data quality. For example, there is no point in investing in personalization technologies if there is little understanding of customer needs across segments.

Many Unknowns, Many Assumptions

The bottom line: don’t add too many unknowns to your transformation program. AI projects require iterative testing and evolution of supporting processes, and clean, consistent, well-architected data is the price of admission. Don’t assume that the data is in place and usable for the target process, and don’t take the promises of vendors or the status reports of program leaders far removed from the front lines as reality. The best way to determine whether supporting processes and data are at the level required for success is through competitive benchmarking, internal benchmarking, heuristic evaluations, and maturity assessments. You need objective metrics to know if your data is adequate.

A heuristic evaluation (one based on a collection of best practices and rules of thumb) can provide a snapshot of how well the organization is doing on current efforts. What does the organization have to work with? Are foundational processes and data quality strong? Or does strengthening the foundation require significant time and effort? A maturity assessment cuts across multiple dimensions that may appear beyond the scope of the domain but would impact downstream processes for a given area. For example, product data maturity would include an understanding of whether service level agreements for suppliers and vendors include data quality measures and remediation processes. Vendors are part of the information supply chain, and problems at the source can negatively impact many downstream processes and dramatically increase costs.

AI can provide competitive advantages, speed time to value, reduce costs, and improve the customer experience. These are all exciting prospects. However, there is no simple way to achieve these impressive outcomes without addressing the enabling steps, which are often considered boring. These include upstream supporting processes, data, architecture, governance, and change management. All too often, these are assumed to be in place or to be someone else’s issue. Or worse, they are not considered to be important enough to address.

As a case in point, according to a 2020 survey by PwC, over 40% of execs intended to deploy AI to improve productivity and efficiency, but only 13% said that standardizing, labeling, and cleansing data for use in AI systems was a top priority for them in the coming year[10]. That is a very telling statistic and reveals a lack of understanding of the critical role of standardized, labeled (well-architected), clean data. Because dependencies multiply once AI is embedded in digital transformation programs, investments in the foundational work cannot be pushed down the road or tackled in a later phase.

The Story on the Front Lines

Above all, get the real story from the front lines of the organization regarding the progress of and benefits from AI projects and digital transformation programs while there is time to course correct. Too many organizations engage in “success theatre” where the reality of failures and setbacks is not communicated to the C-Suite. In some cases, the impact on the careers of leaders is insignificant, because by the time the failures show up in company performance, these individuals have moved on to the next opportunity. Therefore, the motivation to be transparent and accountable is not always strong.

The success or failure of AI and digital transformation is existential for many organizations, so getting to the truth is essential, but often there is a disconnect between stated goals and a realistic assessment about what it takes to get there. In one enterprise, cutbacks in funding of the digital transformation meant that key roles were unfilled, and programs were under-resourced. But the timelines and program objectives did not change. The front line saw the coming train wreck, while leadership did not. Neither AI nor digital transformation is a magical solution that supersedes the fundamentals of our digital world. Rather, they are both solutions that depend on developing those fundamentals, a critical process that cannot be ignored if the organization is to flourish.

Ten Tips for Successful AI-Enabled Digital Transformation

- Consider the data needed to support the effort before beginning the program. Engage data governance and quality experts in the organization to outline the data needed to support the transformation. If migrations or significant quality efforts will be required, they need to begin sooner – not wait for the end of the technical development.

- Pay attention to what front-line workers report about program performance and how it impacts their day-to-day work. Develop channels to gather unbiased feedback.

- Be mindful of the larger enterprise objectives while drilling down into the details of the effort – map the upstream and downstream impacts and dependencies (even at a high level). For example, when optimizing product-related content for SEO for an e-commerce site, consider how that content will need to serve other audiences – customer self-service, call centers, syndication partners, embedded service content in the product itself. Considering only SEO is short-sighted.

- Identify baselines for the processes that will be impacted. These include existing practices and how well they comply with known industry practices (heuristics) and standards. Ensure that the processes and tools to monitor impact are in place.

- Build a communications plan that keeps people engaged: one that promotes wins and reinforces the need for “the boring parts” like improving processes and intentional change management. Bring the right people into the day-to-day operational decision-making and communicate outcomes to execs.

- Be mindful of where technical debt is being increased (by not documenting work or taking shortcuts to launch). Often the things left for later never happen. Too much tech debt will hobble your program.

- Do not expect technical solutions to fix nontechnical problems, such as a set of manual and chaotic processes for customer onboarding. There may be technical pieces, but start with the human understanding of the issues and why certain processes are in place before applying technology.

- Calibrate the program plan to the maturity of the organization. Upstream dependencies are not always apparent, and a certain level of maturity will be needed to be successful. Personalization at scale requires foundational capabilities in content operations, customer journey modeling, knowledge processes, and product information management. A lack of capability in one area will impact the overall program.

- Plan for adequate resources to prepare content, data, and knowledge for the transformation. This requires a deep dive into information processes and lifecycles including sources, uses, provenance, rights, quality, purpose, systems of record, and consuming systems (both internal and external).

- Continually update and refine the roadmap as new dependencies and resourcing constraints arise. Plans should continually evolve as the program encounters new issues, solves problems, and adapts to changing customer needs. If an element of a program loses funding, that impact needs to be captured and, if necessary, timelines adjusted.

In the coming years, we will hear less about AI as a standalone program or project. It will be increasingly woven into the organization’s infrastructure. Digital transformations will be ongoing and there will be an increasing focus on Cognitive Technologies and Intelligent Assistants to improve information access for stakeholders of every kind. AI can be a differentiator and can accelerate digital transformations as long as attention is paid to the fundamentals.

Notes

[1] Flipping the Odds of Digital Transformation Success

[2] Unlocking success in digital transformations

[3] Achieving Return on AI Projects

[4] 10 Ways AI Can Improve Digital Transformation’s Success Rate

[5] Unlocking success in digital transformations

[6] Gain Competitive Advantage by Transcending the Front-Line Paradox

[7] Ibid.

[8] How AI and Digital Transformation will change your business forever

[9] Ibid.

[10] Moving AI Forward: Why You Need to Slow Down Now to Scale Later