A/B testing is, in and of itself, a data-driven approach. However, where regular split testing is like a paper airplane (unpredictable and easily pushed off course), you can now launch A/B tests like a space shuttle: fast, powerful, intelligence-gathering, and mission-completing. This ability emerges at the intersection of big data, behavioral analytics, and multi-touchpoint digital conversations.

Countdown: Before the test

When you first decide to A/B test a webpage, which elements should you test? Some say the copy; others push for the call-to-action (CTA) button. Instead of basing this critical decision on intuition, take a data-driven approach: use facts to determine which elements to test, why they matter, and how each potential result could affect page performance.

By closely monitoring and analyzing in-page visitor behavior before launching a test, you can gather the data required to define testing parameters.

For example, if aggregated visitor behavior metrics show that few visitors reach the content below the fold, test elements or designs that prompt visitors to scroll down. But if your visitors already view the entire page, testing such elements may waste resources.
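As a minimal sketch of that check, assuming your analytics tool can export each session's maximum scroll depth as a percentage of page height, the Python below computes the share of sessions that scrolled past the fold. The sample data, the 40% fold position, and the 50% decision threshold are all hypothetical.

```python
# Hypothetical per-session data: deepest scroll position reached, as a
# percentage of total page height (0-100). Real values would come from
# your behavioral analytics tool's export.
max_scroll_pct = [20, 35, 40, 90, 25, 30, 100, 45, 15, 38]

FOLD_PCT = 40          # assumption: the fold sits at 40% of page height
REACH_THRESHOLD = 0.5  # assumption: an arbitrary cut-off for "few visitors"


def share_below_fold(depths, fold_pct):
    """Return the fraction of sessions that scrolled past the fold."""
    if not depths:
        return 0.0
    return sum(1 for d in depths if d > fold_pct) / len(depths)


reach = share_below_fold(max_scroll_pct, FOLD_PCT)
print(f"{reach:.0%} of sessions scrolled below the fold")
if reach < REACH_THRESHOLD:
    print("Candidate test: add a cue that prompts visitors to scroll")
else:
    print("Most visitors already scroll; test other elements first")
```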

Also, research how different personas behave on your pages; if their behavior differs, aim to micro-optimize the page for each type of visitor.
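One hedged way to compare personas is to segment the same behavioral metrics by visitor type. The sketch below assumes each session record has already been labeled with a persona; the persona labels, record fields, and data are hypothetical.

```python
from collections import defaultdict

# Hypothetical session records: (persona, scrolled_below_fold, converted).
# Persona labels are illustrative assumptions, not a standard taxonomy.
sessions = [
    ("new_visitor", False, False),
    ("new_visitor", False, False),
    ("new_visitor", True, True),
    ("returning_customer", True, True),
    ("returning_customer", True, False),
    ("returning_customer", True, True),
]


def summarize_by_persona(records):
    """Return {persona: (scroll_rate, conversion_rate)}."""
    counts = defaultdict(lambda: [0, 0, 0])  # [sessions, scrolled, converted]
    for persona, scrolled, converted in records:
        c = counts[persona]
        c[0] += 1
        c[1] += scrolled   # bools count as 0 or 1
        c[2] += converted
    return {p: (s / n, v / n) for p, (n, s, v) in counts.items()}


for persona, (scroll_rate, conv_rate) in summarize_by_persona(sessions).items():
    print(f"{persona}: scroll {scroll_rate:.0%}, convert {conv_rate:.0%}")
```

If the segments diverge sharply, as the made-up numbers here do, that is a signal to consider persona-specific variations.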

While the aggregated bird’s-eye view is useful, never forget that every visitor counts: drill down into actual customer journeys to determine visitor intent. Watch session replays of desktop and mobile interactions, and focus on the events most closely linked to positive business outcomes.
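One simple way to see which events are most closely linked to outcomes is to compare the conversion rate of sessions that contain an event with that of sessions that don't. This is a sketch under assumptions: the event names and session data are hypothetical, and a positive gap shows correlation, not causation, so it tells you which replays to watch rather than what to change.

```python
# Hypothetical session logs: the set of events seen in each session and
# whether the session converted. Event names are illustrative only.
sessions = [
    ({"view_pricing", "play_video"}, True),
    ({"view_pricing"}, True),
    ({"play_video"}, False),
    ({"open_faq"}, False),
    ({"view_pricing", "open_faq"}, False),
    (set(), False),
]


def conversion_gap(records, event):
    """Conversion rate with the event minus conversion rate without it.

    A positive gap means the event co-occurs with conversion; it does not
    prove that the event causes conversion.
    """
    with_event = [conv for events, conv in records if event in events]
    without = [conv for events, conv in records if event not in events]
    if not with_event or not without:
        return None  # cannot compare when one group is empty
    return sum(with_event) / len(with_event) - sum(without) / len(without)


all_events = set().union(*(events for events, _ in sessions))
gaps = {e: conversion_gap(sessions, e) for e in all_events}
ranked = sorted(
    (pair for pair in gaps.items() if pair[1] is not None),
    key=lambda pair: pair[1],
    reverse=True,
)
for event, gap in ranked:
    print(f"{event}: {gap:+.0%} conversion vs. sessions without it")
```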

Liftoff: During the test

The data-based A/B tester acts as Mission Control while the test is in progress. Instead of sitting around waiting for results, you can watch session replays as soon as the first visitors experience the variations, to confirm that each funnel works as intended. This enables quick corrective action when needed, reducing lost time and wasted spend.
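Alongside replays, a quick numeric sanity check can catch a broken variation early. The sketch below assumes you can count how many sessions in each variant reached each funnel step. It flags steps where a variant's pass-through rate falls below half of the control's, which more often points to a bug (a missing button, a dead link) than to a genuinely weaker design. The funnel steps, counts, and threshold are hypothetical.

```python
# Hypothetical early-test data: sessions reaching each funnel step, per variant.
FUNNEL = ["landing", "product", "cart", "checkout"]
step_counts = {
    "A": {"landing": 200, "product": 120, "cart": 40, "checkout": 20},
    "B": {"landing": 210, "product": 130, "cart": 0, "checkout": 0},
}


def suspicious_steps(counts, control="A", min_ratio=0.5):
    """Flag (variant, step) pairs whose pass-through rate from the previous
    step is below min_ratio times the control's rate for the same step."""
    flags = []
    for prev, step in zip(FUNNEL, FUNNEL[1:]):
        if counts[control][prev] == 0:
            continue  # no control baseline for this step
        base = counts[control][step] / counts[control][prev]
        for variant, c in counts.items():
            if variant == control or c[prev] == 0:
                continue  # skip the control and steps whose previous step nobody reached
            if c[step] / c[prev] < min_ratio * base:
                flags.append((variant, step))
    return flags


print(suspicious_steps(step_counts))
```

A flagged step is a cue to open the replays for that variant and step, not a verdict on its own.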

This approach lets you iterate and reach results faster. Visual evidence of actual user behavior provides insights you can use to make decisions and correct the test mid-course.

Landing: After the test

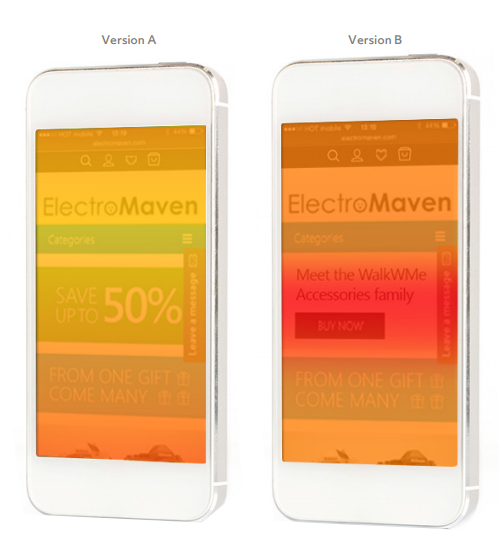

Even when the mission ends, there is still work to be done. Using data in post-test assessments can show you why certain variations performed better than others. Beyond knowing that version B beat version A, understanding why helps you prioritize which changes to implement and choose which elements to test next.

For example, comparing the variations' heatmaps side by side reveals which elements were ineffective. In the example above, the promotional banner pushed the more important call to action out of sight.

Equally important, if not more so, visual proof of the results makes it easier to win buy-in for the necessary changes after the test. By integrating customer intent with A/B testing data, you can markedly change the way your organization views web analytics as a whole.

The benefits of shuttle-like A/B testing

Regular A/B testing answers questions (like “which version of the page converts more?”), while data-based A/B testing explains the puzzles behind them (like “why do more customers convert with this image than with another?”).

Regular A/B testing is costly and time-consuming, and its choice of what to test is often guesswork rather than science. Each cycle requires you to hypothesize about which elements to test, then create and implement the designs. After that, it is a waiting game until the results reach statistical significance. Then the whole process repeats, cycle after cycle.
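For the "waiting game" step, significance for conversion rates is commonly checked with a two-proportion z-test. The Python below is a minimal sketch of that standard test using only the standard library. The conversion counts are hypothetical, and it assumes independent visitors and samples large enough for the normal approximation.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided two-proportion z-test with a pooled standard error.

    Returns (z, p_value). Assumes independent samples large enough for
    the normal approximation to hold.
    """
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 0.0, 1.0  # all or no visitors converted: nothing to compare
    z = (p_b - p_a) / se
    # Two-sided tail probability: 2 * (1 - Phi(|z|)) == erfc(|z| / sqrt(2))
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical counts: 200 of 5,000 visitors converted on A, 250 of 5,000 on B.
z, p = two_proportion_z_test(200, 5000, 250, 5000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

Checking this repeatedly while a test runs and stopping at the first p-value below 0.05 inflates the false-positive rate, so it is safer to fix the sample size before launch.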

A more efficient and effective way to split test is to base your hypotheses on actual data and facts. Data-driven, “shuttle-like” A/B testing is:

- Objective in its choice of elements to test

- Informed by small samples of observed behavior, accelerating the path to insight

- Actionable and engaging, thanks to analyses and visualizations of customer behavior

Regular A/B testing may be somewhat data-driven during the testing phase, but data-based A/B testing is fully data-driven before, during, and after the test.

The final frontier

Being grounded in data launches A/B testing into a new era: one that is focused, powerful, actionable, accessible, effective, and impactful. At its core, data-based A/B testing ties the user experience ecosystem into the business decision process, making that process data-intensive, quick, and profitable.