In 1984 the Queen of Rock, Tina Turner, recorded What’s Love Got to Do with It for her album Private Dancer. As you can easily guess, this is the inspiration for this article’s title.

My topic? Well, I am not into music criticism but industry analysis, which involves thinking about the impact of recent events.

Recent events, yeah, those are the Game of Thrones that happened at OpenAI and the New York Times suing OpenAI. Subsequently, OpenAI stated that “it would be impossible to train today’s leading AI models without using copyrighted materials.” What is implied but not said directly is “for free.” The statement to the House of Lords does mention that OpenAI uses “information that is publicly available on the Internet” and that it is working on offering an ability for creators to opt out.

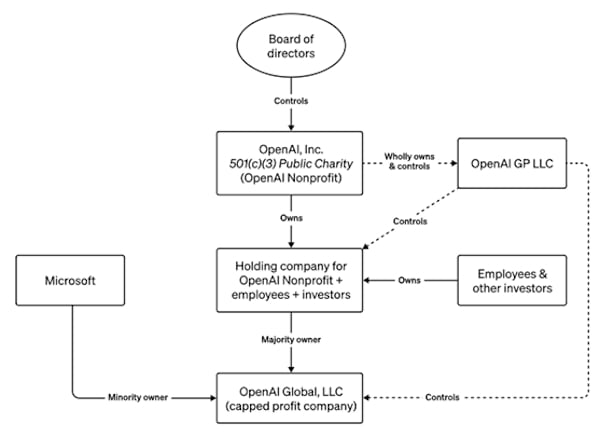

For the time being, OpenAI has weathered the first storm; the new CEO is the old one and the board is (or will be) largely replaced by a group of people who are far more bullish on technology. Yet, the new setup is far from being finalized. I am sure that there will be more because one thing this new board is not: diverse. But then … diversity for diversity’s sake is not a good thing, either.

The outcome of the copyright storm is still open.

But what’s trust gotta do with it?

Short answer? Everything.

Long answer? Read on …

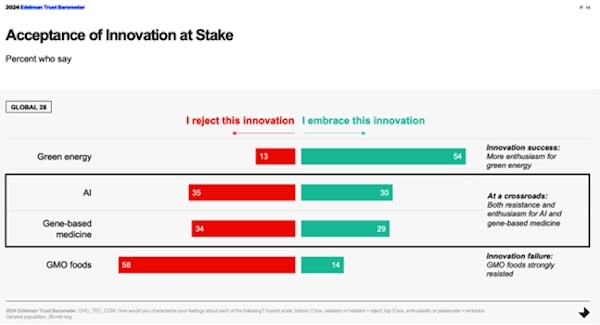

AI is at a crossroads. Numerous studies, like the 6th edition of Salesforce’s State of the Connected Customer (free, registration required) or the just-released 2024 Edelman Trust Barometer, show significant distrust of AI and, by extension, of AI vendors. The jury is still discussing whether people trust or distrust innovation, although the same research surprisingly (at least for me) finds that people trust businesses most when it comes to introducing “innovations into society, ensuring they are safe, understood by the public, beneficial, accessible.” I guess the jury on this one is out, too; see the Game of Thrones.

This required trust in a technology needs to be considered on two levels; let’s call them the micro and the macro level.

On a micro level, each user of an AI system needs to be able to trust that the system helps them do their job. A twist to this is that it must not patronize them at the same time or entice them into ceding their accountability to the system.

The macro level is that customers need to be able to trust their vendors. And this is doubly true in platform markets, where competition, hence choice, is vastly limited. Customers become dependent on vendors in platform markets.

A while ago, I published a post named If AI can’t be trusted, efficacy and efficiency won’t matter here in my column on CustomerThink. In it I wrote that the “right path [for companies to pursue] is where customers can and do trust companies that use powerful tools like generative AI, regardless of B2B or B2C business models.”

By extension, this is obviously also true for the vendors of said tools. What OpenAI did, and continues to do, is behave like the proverbial bull in a china shop: it breaks a lot of porcelain. And this “porcelain” is, you guessed it, trust.

The intention, very likely, was to stay true to the (board) mission and therefore to remain a trustworthy partner in an environment that straddled the conflict between “creating safe AGI that benefits all of humanity” and commercializing AI. As we all know, commercialization won out, which is not necessarily a bad thing. It all depends on how the commercialization works out and what the new role, positioning, and yes, population of the OpenAI board of directors is or will be.

What is a bad thing, though, is that trust in OpenAI as a vendor got damaged and, with it, trust in the technology itself.

But wait, the story goes on!

Let’s talk a little about copyright. The stance that OpenAI (and other AI companies) take is, formulated drastically: Your work isn’t worth a penny, but mine is — and besides, you can opt out. This statement led to some interesting points being made in a LinkedIn discussion by Jon Reed and others. In a recent CRMKonvo, Jon Reed also maintained that the usage of data for training is not different from any other licensing question.

Beyond this important legal question, there is also the ethical one about the quality of the training data. Is it toxic in itself? Some datasets are.

All this, importantly, leads to the question of whether users of AI services open themselves up to copyright or other litigation when using these services.

Not to speak of implementing and deploying nefarious applications using AI technology.

Trust but verify!

What should businesses do now?

The above should by no means discourage the use of generative AI, or AI in general, for that matter.

On the contrary, especially as I expect this year to become the year of truth. Vendors of AI tools will (need to) strive to show more of the value that buyers create by using their tools, and buyers should hold them accountable for their promises.

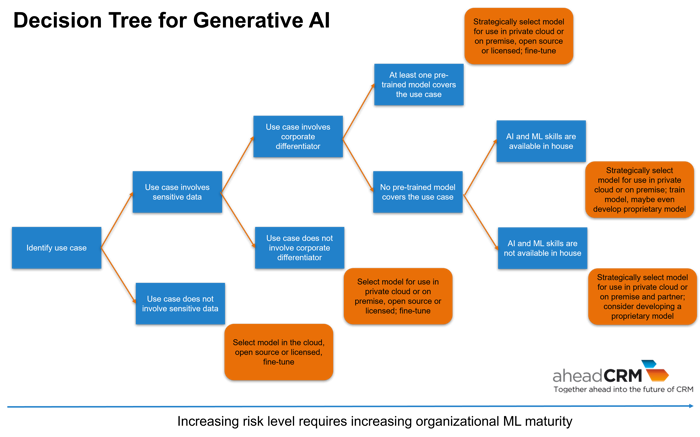

Still, not every vendor is created equal. Therefore, buyers need to carefully select use cases as well as partners to play with. Last, but not least, the new toy, err … tool, needs to fit into the existing IT strategy and landscape.

According to my friend and colleague Jon Reed, customers will find themselves on a scale between over-aggressively pushing AI and holding off on any AI projects. These extremes can and should be avoided. That leaves the middle ground of carefully selected projects with carefully selected, trusted partners, as Jon also suggests.

To support the decision process for this, I suggested this decision tree for use cases and the selection of corresponding models in an earlier article named “Generative AI: What’s Next and How to Get the Payoff”.

Second, when a software selection includes AI, in particular generative AI, it is important to get answers to the questions that I outlined in my earlier article If AI can’t be trusted, efficacy and efficiency won’t matter, referenced above, plus likely a plethora of more detailed ones. Brian Sommer, who has LOTS of experience with software selection, recently gave some very good advice on what to ask in detail.

So, buyers, check whom you want to trust, select your projects carefully, and ask your vendors the right questions, the tough ones. Good, trustworthy partners will share the risk with you. Take it from there.