Today, thousands of surveys are being sent out, completed, tabulated, analyzed, and put into presentations and dashboards. Recommendations are being made. Executives are making decisions. Products are being green-lighted or cancelled. Franchise agreements are being reviewed. People are being hired or fired.

Lives are being affected.

The problem is that, many times, the data being collected is not just questionable; it’s bad.

The sneaky thing about surveys is that they have the appearance of objective, quantitative information. If you count the rabbits in the woods, that’s the number of rabbits that live there. If you take a random sample of M&Ms from a giant bag from your local Mega Mart, you can predict quite accurately the proportion of each color in the entire bag…or perhaps even what is being produced at the factory.
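To put a number on “quite accurately”: for an objective, countable attribute like M&M color, a random sample yields an estimate with a known margin of error. The minimal sketch below uses the textbook normal-approximation (Wald) interval for a proportion. It assumes a simple random sample drawn from a bag much larger than the sample, and the counts are made up for illustration.

```python
from math import sqrt


def proportion_ci(successes: int, n: int, z: float = 1.96) -> tuple[float, float, float]:
    """Estimate a population proportion from a simple random sample.

    Returns (estimate, lower, upper) using the normal-approximation (Wald)
    interval; z = 1.96 corresponds to roughly 95% confidence.
    """
    if n <= 0 or not 0 <= successes <= n:
        raise ValueError("need n > 0 and 0 <= successes <= n")
    p = successes / n
    # Standard error of a sample proportion: sqrt(p * (1 - p) / n)
    margin = z * sqrt(p * (1 - p) / n)
    # Clamp to [0, 1] because a proportion cannot fall outside that range
    return p, max(0.0, p - margin), min(1.0, p + margin)


# Hypothetical scoop: 100 blue M&Ms out of 400 drawn at random
estimate, low, high = proportion_ci(100, 400)
print(f"blue share ≈ {estimate:.1%} (95% CI {low:.1%} to {high:.1%})")
```

With these invented counts, the estimate is 25% with a 95% interval of roughly 20.8% to 29.2%. That tidy margin exists only because color is directly observable. It says nothing about whether a respondent is telling the truth.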

The first thing to know is that surveys aren’t counting objective things like M&Ms and rabbits. They attempt to measure and quantify subjective states. Satisfaction or affect is not something you can see in the earth or on a store shelf. All measurement carries error in many forms, and this “random error” is old news: as long as the measure is relatively reliable (i.e., consistent), we can live with some error around validity, or the “true” measure.

The larger boogeyman in survey research is straight-up fraud: people intentionally misrepresenting who they are or what they think, feel, or believe in order to get a reward. This comes in three distinct flavors in the insights and CX world. Let’s take a look.

Flavor 1: Review Fraud

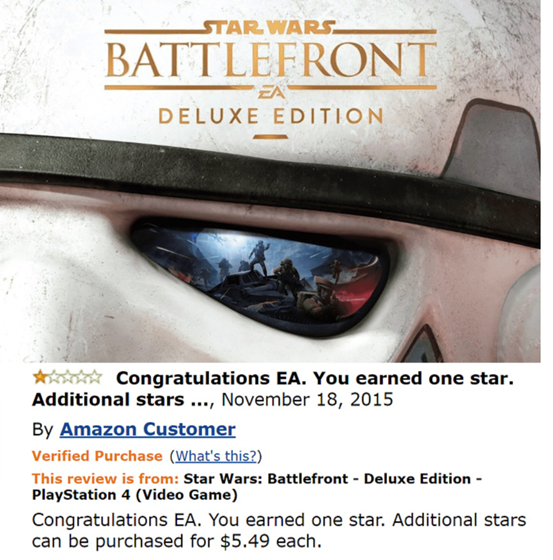

Ah, a golden oldie that isn’t actually that old. In this scenario, people provide fake reviews. It happens every day on Amazon, TripAdvisor, and other review sites. Why? Good reviews sell more stuff, and bad reviews can put you out of business. Given that, there is strong motivation to have good reviews, and plenty of companies will happily offer a shortcut to get them.

While some “closed” systems such as Airbnb and Uber make it very hard to generate fake reviews (or even to remove undesirable ones), open systems are much easier to game. Even so, it is not at all unusual to see pandering and quid pro quo schemes for top-box scores in closed systems as well. You can even find people on Fiverr and other sites who will write fake reviews for you. Online retailers do their best to scrub fake reviews, but plenty are still out there. Some of them are very amusing.

That being said, fraud is serious business. If consumers can’t tell what is fake from what is real, they will assume the whole review ecosystem lacks integrity and will stop using it to make decisions. Some CX providers advocate pushing only positive reviews to review sites, which is bad advice because it, again, erodes confidence in the integrity of the review site.

Flavor 2: Survey Fraud in Customer Experience

Cheating on post-transaction surveys has long been discussed and debated in the customer experience (formerly “customer sat”) world.

The scenario is this: you just bought a widget, and you received an email encouraging you to “tell us about your experience.” The idea is that if consumers provide feedback, companies can improve products and services and coach front-line employees to do better.

Sounds good right?

Usually it is, but it can, and sometimes does, go horribly wrong.

Unfortunately, NPS and other metrics can be used as a hammer rather than a diagnostic tool. In some contexts, such as call centers, hapless representatives’ jobs can depend on hitting not only productivity goals but also survey goals.

For a franchise owner, hitting or missing that score can be the difference between an all-expenses-paid incentive trip to Tahiti for exceeding the customer experience goals and potentially losing the franchise for consistently falling short of them.

As is well documented, when most people must choose between changing their behavior to improve the experience and influencing the score, they will pick whichever is easier. Sadly, that too often means influencing the score.

Channel partners have become very adept at “survey management.” There are even black-market manuals with instructions on how to do it well. Tactics range from made-up customers and falsified surveys to completing surveys on customers’ behalf or “coaching” customers toward a particular score.

Years ago, a young salesperson told me that if I didn’t give all top-box ratings about my experience, she would lose her job. When I explained that my company conducted the study and that what she was trying to do violated her employer’s own policy, she looked at me and said, “No, you don’t understand. Those guys in that room will fire me,” and pointed to her sales manager.

In my experience, CX technology countermeasures have been pretty good at weeding out cheats in the outbound and inbound parts of the process. However, survey “coaching” is still rampant and can harm the very thing the program is trying to improve: the customer experience.

This remains an issue in CX, and it is why you should carefully evaluate whether to attach incentives to your measurement program.

Flavor 3: Fraud in the Insights World

This takes us to the world formerly known as “Market Research,” now more commonly called “Consumer Insights.” While there is plenty of good work going on in the insights world, there is a widely acknowledged problem: the rather seedy business of panel sample.

For those not familiar, panel sample consists of people who have volunteered to complete surveys in exchange for some form of reward or compensation. Sometimes this is points; sometimes it is cash. For at least 50 years, this worked out just fine. Sure, it didn’t do a good job of reaching certain slices of the world (particularly rural males), but by and large it worked pretty well.

Then the internet happened.

Suddenly, it was much more difficult to validate that respondents were who they said they were, or, more recently, that they were even people at all. This created an opportunity for survey fraud.

While most people wouldn’t bother to cheat their way to a new toaster or a few bucks, some would. Those “some” tend to be the unemployed, the retired, the homeless, or people adept at using readily available VPN tools, who may not even be in this country. Basically, anyone with a lot of time and not a lot of money. That is great if you are studying retired people or the homeless, but that is a rather narrow and infrequently needed sample frame.

Sadly, the problem is rampant. While there are hundreds, if not thousands, of providers, it is not uncommon for a panel provider that gets into a pinch to turn to “partners” to help out.

At Curiosity, we regularly throw out (and don’t pay for) at least 50% of our sample returns from most (not all) panel companies, and this is after those providers (and we) have applied sophisticated upfront filters, survey traps, and inbound algorithms and rules.
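The specific filters, traps, and rules vary by provider and are rarely published, so what follows is only a hypothetical sketch of the kind of respondent-level checks that are commonly used. It flags speeders (here, anyone faster than one third of the median completion time, an assumed cutoff), straight-liners on a rating grid, failed attention-check “trap” questions, and repeat device fingerprints. The `Response` fields and thresholds are illustrative assumptions, not Curiosity’s actual rules.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class Response:
    respondent_id: str
    seconds: float     # time taken to complete the survey
    grid: list[int]    # answers to one rating grid, e.g. a 1-5 scale
    trap_answer: str   # answer to an attention-check ("trap") question
    fingerprint: str   # hypothetical device/browser fingerprint from the survey platform


def flag_responses(responses, trap_expected, speed_ratio=1 / 3, min_grid=5):
    """Return {respondent_id: [reasons]} for every response that trips a rule.

    Thresholds are illustrative assumptions, not an industry standard.
    """
    median_seconds = median(r.seconds for r in responses)
    seen_devices = set()
    flags = {}
    for r in responses:
        reasons = []
        if r.seconds < speed_ratio * median_seconds:
            reasons.append("speeder")            # far faster than a typical respondent
        if len(r.grid) >= min_grid and len(set(r.grid)) == 1:
            reasons.append("straightliner")      # same answer on every grid row
        if r.trap_answer != trap_expected:
            reasons.append("failed_trap")
        if r.fingerprint in seen_devices:
            reasons.append("duplicate_device")   # a later response from an already-seen device
        seen_devices.add(r.fingerprint)
        if reasons:
            flags[r.respondent_id] = reasons
    return flags


batch = [
    Response("a", 600, [4, 5, 3, 4, 2], "blue", "x1"),
    Response("b", 120, [3, 3, 3, 3, 3], "blue", "x2"),
    Response("c", 540, [5, 4, 4, 3, 5], "red", "x1"),
    Response("d", 480, [2, 3, 4, 4, 3], "blue", "x3"),
]
print(flag_responses(batch, trap_expected="blue"))
```

In this made-up batch, respondent “b” is flagged as a speeder and a straight-liner, and “c” is flagged for failing the trap and reusing a device. Rules like these catch careless or automated responses. They cannot detect a fluent human who is simply lying about who they are.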

Like the opioid crisis, this is a classic case of supply and demand involving three parties. First, we have the panelists themselves. If they can find a way to quickly fill out many surveys for points or cash, they will, and they do. In fact, there are even bots you can use to automate the process. In high enough volume, you can make modest money even if half of your responses are thrown out. Anyone can register for panels multiple times, often without vetting or detection.

Second are the panel sample companies. There is big money in sample, and it’s a simple business. You do your best to estimate incidence and then charge a premium for lower-incidence groups. Next, you crank through sample, millions and millions of records, until you get what you need. When you are done, you compensate valid completes and move on. It’s a low-overhead, high-volume business.
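The economics of cranking through sample come down to simple multiplication. As a hypothetical sketch: if only a fraction of invited records respond, only a fraction of those qualify (the incidence), and only a fraction of those survive quality checks, then the records needed equal the target divided by the product of the three rates. The 50% usable rate in the example echoes the discard rate mentioned above. The response rate and incidence figures are invented for illustration.

```python
from fractions import Fraction
from math import ceil


def records_needed(target_completes: int, response_rate: str,
                   incidence: str, usable_rate: str) -> int:
    """Estimate how many panel records must be invited to reach target_completes.

    Rates are decimal strings such as "0.02"; Fraction parses them exactly,
    so the final rounding up is not thrown off by floating-point error.
    """
    rates = {"response_rate": Fraction(response_rate),
             "incidence": Fraction(incidence),
             "usable_rate": Fraction(usable_rate)}
    for name, rate in rates.items():
        if not 0 < rate <= 1:
            raise ValueError(f"{name} must be in (0, 1], got {rate}")
    # Usable completes per invited record = responds x qualifies x passes checks
    yield_per_record = (rates["response_rate"] * rates["incidence"]
                        * rates["usable_rate"])
    return ceil(target_completes / yield_per_record)


# Hypothetical: 10% respond, and half of completes are thrown out
print(records_needed(200, "0.10", "0.02", "0.5"))   # low-incidence target (2%)
print(records_needed(200, "0.10", "0.50", "0.5"))   # general-population target (50%)
```

Under these assumptions, 200 usable completes at 2% incidence require 200,000 invited records, while the same target at 50% incidence needs 8,000. That gap helps explain both the premium on low-incidence groups and the temptation to cut corners when filling them.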

Most panel companies have plenty of high-level documentation of their sourcing and cleaning rules, but transparency at the operational level is still lacking. Often it’s black-box stuff, with many panel companies being a bit opaque about exactly where the sample comes from and how they manage it. “Trust us, Jack, we’ll get you your chicken nuggets; just don’t ask how we make them.”

Finally, we have clients. If research providers say they are going to find 200 one-legged accountants who enjoy reggae music, they will do everything in their power to find them. When the sometimes naïve researcher discovers that those folks are actually hard to find or don’t exist, some clients don’t care. “Find them!” they say. This, in turn, rolls downhill. Panel providers eventually find them…but the sample quality is sometimes far less than stellar.

In the end, when things go off the rails, the consequences can be bad. In the best case, we are wasting money on fiction. In the worst case, we are making important business decisions based on fiction. It’s not good.

What to Do

So, are all panel companies horrible? No. There are a few out there that do an incredible job of scrubbing sample. I have found, however, that once you get beyond basic sample requirements, they struggle to meet researchers’ needs. They just don’t have the depth and reach. I don’t think it is possible to create a jack-of-all-trades panel that serves every industry equally well. Also, outbound, inbound, and anti-cheating countermeasures only get you so far, and the problem is only going to get worse as AI gets good enough to produce convincing survey feedback.

So, should we just despair and give up? I don’t think so. There are a few things we can do instead.

Customer Panels

Customers who raise their hands to join a client’s own panel tend to provide excellent-quality data. Although they are perhaps a bit biased (they are usually brand fans), they also want to help the brand. That means they tend to be tough graders who pay attention to details. Creating your own panel isn’t easy and it isn’t cheap, but it is an excellent source of data.

Carefully Vet and Create an Industry Panel

If you are in the hat business, there are likely people out there who are really into hats of all kinds. Find them, recruit them, vet them, and treat them well. Again, this is not going to be cheap, but if you take the time to find people who are interested in your industry, the data quality will be worth it. These are hat enthusiasts, so there will be bias, but I think the tradeoff is well worth it. It’s better to get 100 completes from a good-quality sample than 10,000 completes from fakes and imposters. You may also want to consider vetting based on articulation and curiosity.

In fact, Curiosity has developed a set of questions that help find people who are naturally inclined to provide higher-quality feedback. Is this a biased sample? Yup. But so is every single form of sample out there…except maybe the census, which isn’t a sample at all.

Intercepts

OK, so you are in a hurry to get stuff done and you don’t have time to horse around with creating a panel. Sending people out to conduct intercepts can be very effective if the survey is short. Interested in people’s opinions about a new hammer? Work out a cluster sampling plan and deploy some folks in the field for a day. Again, it’s not cheap, but the quality of the data you get back is going to be much higher than a Hail Mary into the black box of panels.
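For readers unfamiliar with the term, a cluster sampling plan for intercepts typically works in two stages: randomly pick a handful of locations (the clusters), then select people systematically within each one. The sketch below is a hypothetical illustration of that idea. The store names, interval, and quota are assumptions, and it ignores practical details such as day of week and site traffic.

```python
import random


def cluster_intercept_plan(sites, n_clusters, interval, quota, seed=None):
    """Sketch a two-stage cluster sample for in-person intercepts.

    Stage 1: randomly choose n_clusters sites (the clusters).
    Stage 2: at each site, approach every `interval`-th passer-by after a
    random start until `quota` interviews are complete (systematic sampling).
    """
    if not 0 < n_clusters <= len(sites):
        raise ValueError("n_clusters must be between 1 and len(sites)")
    if interval < 1 or quota < 1:
        raise ValueError("interval and quota must be positive")
    rng = random.Random(seed)  # seeded so the plan can be reproduced and audited
    chosen = rng.sample(sites, n_clusters)
    return {
        site: {"start": rng.randint(1, interval), "every": interval, "quota": quota}
        for site in chosen
    }


# Hypothetical hardware-store locations for the new-hammer study
stores = ["Store A", "Store B", "Store C", "Store D", "Store E", "Store F"]
for site, rule in cluster_intercept_plan(stores, 3, interval=5, quota=20, seed=7).items():
    print(site, rule)
```

The random start and fixed interval keep field staff from hand-picking friendly-looking shoppers, which is one of the quieter ways intercept data can drift.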

Mixed Methodology

I have always advocated “triangulating on truth.” No single methodology or study is going to get you to the truth. A mix of customer panels, vetted industry panels, and qualitative research is amazingly effective.

Qualitative research sometimes gets a bad rap for being flaky and unscientific. While we can’t sales-weight a focus group and make forecasts from it, talking to people face to face is invaluable. Over the years, I have come to trust qualitative work as much as, or even more than, quantitative work. You can’t argue with the validity of someone sitting in their kitchen telling you why they hate the blender they bought and showing you exactly why.

Observation

Heinz spent a ton of money just watching people use ketchup, in particular how young people use it. Over a several-month project, they hung out in fast-food restaurants, amusement parks, cafeterias, and people’s homes to watch one thing: how people use their product.

Through this, they realized a few things. First, people use about three to four packets on average, yet in a fast-food setting they grab six to eight, resulting in a lot of waste. Second, people use ketchup in two ways: dipping their fries in it or slathering it onto a hot dog or hamburger.

From this simple observation, they created the award-winning “Dip and Squeeze” ketchup packet, which holds about as much ketchup as three regular packets and can either be opened at the top for dipping or used to squeeze ketchup out through the end.

The point is that sometimes it is better to watch people than to ask them anything. In fact, some people may not even be aware of what they are doing. Careful, directed observation can be very powerful.

Imprison Panels?

So, should everyone stop using panels immediately? Should we stop surveying our customers after they visit the donut shop? Probably not, but I would encourage insights professionals to carefully evaluate the origin of their insights. Likewise, I would encourage CX professionals to ask seriously whether tying incentives to survey scores is in their best interest. Fraud is a real problem in both the CX and insights industries.

Surveys can be cheap to do, but if the data seems too cheap to be true…it probably isn’t.

Cold Reality: Since the beginning of insight gathering (i.e., since the first researcher’s shingle read “Og The Caveman Market/Customer/Employee/Brand Research”), there has, unfortunately, always been evidence of fraud. Nature abhors a vacuum, and cheating always lingers over qual and quant research results. Your post reviews this very well and also outlines how good, professional research and consulting organizations have put, and can put, effective security guardrails in place, everywhere from quasi-qualitative ethnographic studies to enormous panels, to reduce data compromise and enhance objectivity.

Great insights, Dave. Early in my career, when I was a corporate marketing director, I hired a research firm to test market receptivity to a new product concept. The research company’s account executive actually asked me, “What do you want the data to say?” Similarly, a lot of the so-called independent research on the safety and effectiveness of OxyContin came from studies paid for by…you guessed it…Purdue Pharma. Just two examples of research fraud used to fool unsuspecting consumers.

@chris and @michael, thanks so much for your comments and readership. Christopher, I once had an exec tell me, “So we are going to find this in the research, aren’t we, Dave?” That was long ago…but it still sticks with me.

As you note, there’s still a lot of “gaming” of CX surveys by employees, either because of management pressure or financial opportunity. Setting aside the array of actionability challenges posed by traditional CX metrics like NPS, CSat, and CES (https://customerthink.com/emerging_chinks_and_dents_in_the_universal_application_and_institutionalization_armor_of_popula/), not nearly enough has been done to keep employees from putting their thumbs on the CX research scale. I recall a post-delivery survey conducted in person by an auto dealership salesperson, who actually said to me: “On a scale of 1 to 5, where 5 is excellent and 1 is poor, please tell me if there is any reason you can’t give me a 5.”