As a CRM consultant working with many organizations, I find it hard to miss that AI is on everyone's mind. Does it help? Where is the biggest impact? How do I start? What skills do I need? These are only some of the questions that I hear on a nearly daily basis.

All these questions have one thing in common: they are very operational and tactical.

Having had many talks and engagements about implementing AI for one purpose or another, I notice two trends in AI adoption.

- First, in a large number of organizations this topic is IT-driven.

- Second, the topic is treated with a technology mindset.

Sometimes there is an intent to set up a dedicated organization for it. But again, the questions I am asked most often are about data, data scientists, and the systems and applications to be used.

Without any doubt, these are important questions. But they are not the only ones, and probably not even the most difficult or most important ones.

There are many more questions, starting with the overarching strategy, the scope to be implemented, the business areas to be supported, and so on. They continue with compliance and data protection topics, through to organizational ones and, very importantly, the fears of the affected employees. In Germany, add the works council, which will regularly have a say, too. At the end of the day, AI is always about automation, and automation is about using fewer people to achieve the same result. And yes, so far the prevalent opinion is that AI creates more jobs than it takes, but the new jobs require a different skill set than the ones that are automated away.

There are some ethical questions, too. One of the best known is the trolley problem, which the MIT Media Lab extended to gather a perspective on human ethics by having people decide on the lesser of two evils. If you don’t know it yet, I can only recommend trying the emotional AI app that is part of the Moral Machine. It is a truly enlightening experience. Bias falls into the same category. An admittedly very extreme and obvious example of bias is Microsoft’s Tay, an AI bot that got re-trained after being released on Twitter, where it continued to learn from its conversations.

What I take from this is that there are four classes of questions that need to be answered:

- Tactical-operational questions

- Strategic questions

- Compliance and security questions

- Ethical questions

These questions are related to each other and can be answered by applying the 7S model, which was originally developed by McKinsey.

Reality Check. The Challenges – An Example

Of these, the tactical-operational ones seem best understood, while the other three categories tend to be neglected. As an example, I can cite a utility company that approached me about implementing some AI scenarios. Their first idea was very tactical: implementing prototypes for AI-driven chatbots to gain some experience. This approach clearly addressed some of the technical questions. However, they figured out for themselves that it falls short.

They decided to implement an AI centre of competence (CC), which is a good idea.

And they are still asking for help, which is another good idea.

But!

The organization that is charged with building this CC is IT. This severely limits its scope. While IT is more than competent to work out solutions for questions about data, cloud strategy, required computing resources, security, and even data protection, it cannot look deep enough into questions such as the necessary changes to the organizational model, not to mention process or business models. And what about alleviating employee fears? Will the company exhibit the necessary culture and strength to go through with the adoption and take full advantage of what it is about to pursue? This might be the material for an upcoming post – in a few years.

This is a truly exciting project that is also a good showcase for the overall need to address the four categories of questions mentioned above.

Let’s have a look at how the 7S model can help.

Success Factors of an AI Transformation

So far I have talked about how difficult it is to implement AI in organizations. Seems impossible, right?

Although it is a daunting task that challenges each and every business, it is far from impossible to embrace the possibilities that AI technologies can offer. There are several models that support the necessary transition. One of them is the 7S model, which was originally developed by McKinsey, not as a model to implement AI but as a model to support transformational change.

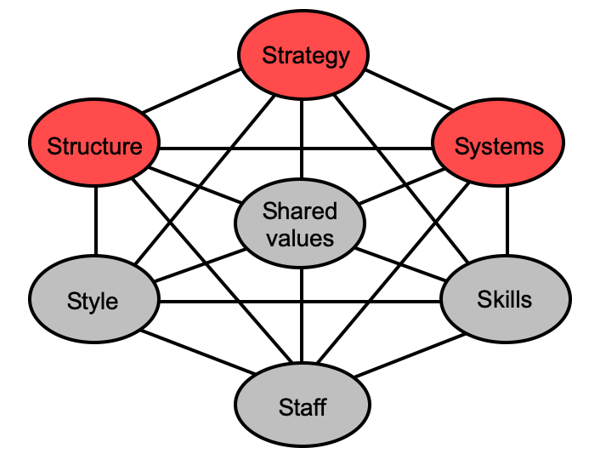

The McKinsey 7S model identifies seven success factors: three of them hard (strategy, structure, and systems; shown in red) and four of them soft (shared values, skills, style, and staff). The model says that these seven factors need to be aligned, and even mutually reinforce each other, for an organization to succeed sustainably when implementing a transformational change.

Because the seven factors are interrelated, the model shows what needs to be looked at, and normally realigned, if one of the elements is changed. Apart from technological, operational and strategic questions, the 7S model also covers the questions about compliance and ethics. It does so by combining soft and hard success factors. Ethics, for example, is part of the shared values and is expressed by style, by the staff that gets hired, and by how people get trained. The same holds for compliance and security, which can additionally be supported or enforced by systems.

Applying the model to embedding AI in a business immediately shows that looking purely at systems and skills is not enough. Implementing AI in a company is a paradigm shift that needs to address the other five factors, too.

Because all the factors are interconnected and form a kind of rubber band, a workable approach is to start with the hard success factors. The equally important soft factors act as constraints that help keep the overall company organization aligned.

First, build a clear AI strategy that can then be used to create a portfolio of initiatives, which can be worked on using an already existing portfolio management process. It is important that this AI strategy is part of the overarching corporate strategy and is governed using objectives, KPIs, and a supporting framework within the portfolio management process. With a "think big, act small" approach, results can be delivered fast and a quick realignment is possible.

Second, choose an organizational model that helps to fulfill the given strategy. There are a number of models that can be applied here, ranging from forming a dedicated team to implementing an AI community of practice made up of people with matching interests and professions, or a parallel structure. To highlight the interdependent nature of the 7S model: the preferred organizational model depends to some extent on corporate culture, meaning style and shared values. Which people to choose for driving the strategic topic depends on their skills.

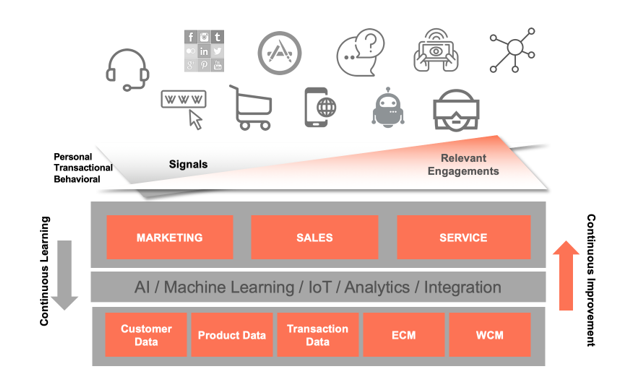

Third, based upon your chosen platform, select the AI systems that help most in solving current needs and that offer the roadmap that best supports upcoming requirements. One of the platform's core functions is to provide continuous learning facilities in order to enable a continuous improvement of engagements.

The keyword here is platform, because the choice of platform again relates back to the soft success factors staff and skills, and of course to the hard success factor strategy.

All success factors affect one another. Changing one requires realigning the others.

The 7S model illustrates that it is not enough to look at transformational projects purely from the point of view of one department.

Because incorporating AI into a company involves many dimensions, the 7S model also reinforces the mindset of thinking big while acting small to deliver success continuously.