This is the second post in my three-part series on issues that can affect the validity of survey findings and/or the credibility of survey reports. As I wrote in my last post, surveys and survey reports have become important marketing tools for many kinds of B2B companies, including those that offer marketing-related technologies and services. As a result, many B2B marketers are now both producers and consumers of survey-based content.

Whether they are acting as producers or consumers, marketers need to be able to evaluate the quality of research-based content resources. Therefore, they need a basic understanding of the issues that can impact the validity of survey results and make survey reports more or less credible and authoritative.

This post will discuss an issue that can easily undermine the credibility of a survey report. My next post will focus on some of the issues that can affect the validity of survey findings.

The "Sin" of Unjustified Conclusions

An essential requirement for any credible survey report is that it must contain only conclusions that are supported by the survey data. This sounds like basic common sense - and it is - but unfortunately too many survey reports include explicit or implied conclusions that the survey findings don't actually support. In my experience, most unjustified conclusions arise from blurring the line between correlation and causation.

One of the fundamental principles of data analysis is that correlation does not establish causation. In other words, survey findings may show that two events or conditions are statistically correlated, but this alone doesn't prove that one of the events or conditions caused the other. Many survey reports emphasize the correlations that exist in survey data, but few reports remind the reader that correlation doesn't necessarily mean causation.

The following chart provides an amusing example that illustrates why this principle matters. The chart shows that from 2000 through 2009 there was a strong correlation (r = 0.992568) between the divorce rate in Maine and the per capita consumption of margarine in the United States. (Note: To see this and other examples of nonsensical correlations, take a look at the Spurious Correlations website.)

I doubt any of us would argue that there's a causal relationship between the divorce rate in Maine and the consumption of margarine despite the strong correlation. These two "variables" just don't have a common-sense relationship.
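To see how easily a high correlation coefficient can arise, here is a minimal Python sketch. The two series are invented for illustration (they are not the Maine divorce or margarine figures), and `pearson_r` is simply a helper name I've chosen. The only thing the two series have in common is that both decline steadily over ten periods.

```python
from math import sqrt


def pearson_r(xs, ys):
    """Return the Pearson correlation coefficient of two equal-length lists."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Sum of products of deviations from each mean (unnormalized covariance).
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    # Sums of squared deviations (unnormalized variances).
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


# Made-up values: two unrelated quantities that both happen to trend downward.
series_a = [10.0, 9.1, 8.3, 7.2, 6.4, 5.1, 4.3, 3.2, 2.1, 1.4]
series_b = [50.2, 47.9, 46.1, 44.3, 41.8, 40.1, 38.2, 35.9, 34.1, 32.3]

r = pearson_r(series_a, series_b)
print(f"r = {r:.4f}")
```

Because both series follow a shared downward trend, the coefficient comes out very close to 1, even though nothing in the data connects one series to the other.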

But when there is a plausible, common-sense relationship between two events or conditions that are also highly correlated statistically, we humans have a strong tendency to infer that one of the events or conditions caused the other. This tendency can lead us to see a cause-and-effect relationship in cases where none actually exists.

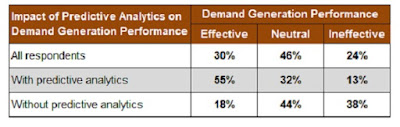

Here's an example of how this issue can arise in the real world. A well-known research firm conducted a survey that was primarily focused on capturing data about how the use of predictive analytics impacts demand generation performance. The survey asked participants to rate the effectiveness of their demand generation process, and the survey report includes findings (contained in the following table) showing the correlation between the use of predictive analytics and demand generation performance.

Based on these survey responses, the survey report states: "Overall, less than one-third of the study participants report having a B2B demand generation process that meets objectives well. However, when predictive analytics are applied, process performance soars, effectively meeting the objectives set for it over half of the time." (Emphasis in original)

The survey report doesn't explicitly state that predictive analytics was the cause of the improved performance, but it comes very close. The problem is, the survey data doesn't really support this conclusion.

The data does show there was a correlation between the use of predictive analytics and the effectiveness of the respondents' demand generation process. But the survey report - and likely the survey itself - didn't address other factors that may have affected demand generation performance.

For example, the report doesn't indicate whether survey participants were asked about the size of their demand generation budget, the number of demand generation programs they run in a typical year, or their use of personalization in those programs. If these questions had been asked, we might well find that all of these factors were also correlated with demand generation performance. In that case, the survey data alone couldn't tell us which factor, if any, actually drove the better results.
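The effect of an unmeasured factor can be illustrated with a small simulation. The Python sketch below is purely hypothetical: the respondents are synthetic, the probabilities are numbers I made up, and none of it comes from the survey discussed above. In this toy model, a large budget makes a company more likely to use predictive analytics and, separately, more likely to meet its objectives. Predictive analytics itself has no effect on performance by construction.

```python
import random


def simulate(n=20000, seed=0):
    """Generate synthetic respondents as (high_budget, uses_analytics, meets_objectives)."""
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        high_budget = rng.random() < 0.5
        # Assumption: budget drives adoption of predictive analytics...
        uses_analytics = rng.random() < (0.7 if high_budget else 0.2)
        # ...and budget alone drives performance. Analytics plays no role here.
        meets_objectives = rng.random() < (0.6 if high_budget else 0.2)
        rows.append((high_budget, uses_analytics, meets_objectives))
    return rows


def success_rate(rows, uses_analytics, high_budget=None):
    """Share of matching respondents who meet objectives, optionally within one budget group."""
    selected = [
        r for r in rows
        if r[1] == uses_analytics and (high_budget is None or r[0] == high_budget)
    ]
    return sum(r[2] for r in selected) / len(selected)


rows = simulate()

# Comparison a survey report would typically show: users vs. non-users overall.
overall_gap = success_rate(rows, True) - success_rate(rows, False)

# The same comparison within each budget group.
gap_high = success_rate(rows, True, True) - success_rate(rows, False, True)
gap_low = success_rate(rows, True, False) - success_rate(rows, False, False)

print(f"Overall gap (users minus non-users): {overall_gap:.3f}")
print(f"Gap among high-budget respondents:   {gap_high:.3f}")
print(f"Gap among low-budget respondents:    {gap_low:.3f}")
```

In this made-up scenario, users of predictive analytics look considerably more successful overall, yet the gap largely disappears once respondents are compared within the same budget group. A survey that never asked about budget would have no way to detect this.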

The bottom line is, when marketers conduct or sponsor surveys, they need to ensure that the survey report contains only those claims or conclusions that are legitimately supported by the survey data. And as consumers of survey research, marketers must always be on the lookout for unjustified conclusions.

Top image courtesy of Paul Mison via Flickr CC.