Is the new iPhone better than the latest Android phone? Ask 10 people and you’ll get 10 different answers — all of them based on opinion.

It’s similar with customer feedback metrics. Should you use Net Promoter or Customer Effort Score or Customer Satisfaction or some other new fad metric?

The opinions vary widely based on the last blog the person has read.

The good news is that an excellent new paper from the University of Groningen sheds some light on which is really better based on empirical research. That’s right, actual science.

What’s nice about this paper is that the researchers don’t seem to have an axe to grind. They don’t work for one of the competing consulting firms that promote this question or that question. Hopefully that makes their research more balanced.

They also look to have done their work very thoroughly, with good sample sizes and methodologies. They controlled for a variety of factors including duration as a customer.

I’m not qualified to assess the statistical approaches they used, as they are beyond my knowledge in this area, but I have assumed that they were applied appropriately.

The Focus of the Research

The paper is aptly titled: “The predictive ability of different customer feedback metrics for retention” and is freely available online for review.

In it, through a series of surveys and advanced statistics, the authors investigated:

- The predictive power of a range of customer feedback metrics

- Across industry, firms and individual customers

Summary Of Findings

You can read the whole paper for the details, but I’ve pulled out a few key items that I found interesting.

NPS Is an Effective Predictor of Customer Retention

With our findings, we can state that, in contrast with previous research (e.g., Keiningham, et al., 2007b), monitoring NPS does not seem to be wrong in most industries. Our findings indicate that the NPS is an effective predictor of customer retention, though the top-2-box customer satisfaction is slightly better overall.

(emphasis mine)

and:

…”changes in top-2-box customer satisfaction, followed by the official NPS, have the highest impact on customer retention”

Much has been made of the work of Keiningham, et al. in “proving” NPS is not predictive. This comprehensive research rejects that conclusion.

Customer Effort Score is Not Effective

Yet, by quite a large margin, CES is still the worst-performing CFM. Thus, even for the group for which the CES was designed, it is still outperformed by the other CFMs.

While it may be a great idea, the Customer Effort Score metric is less effective than all of the other metrics analysed.

The authors go on to say:

In terms of the limited overall incremental value of the CES in itself, managers should be reluctant to adopt any metrics that have a past focus and are limited in focus on one specific attribute and/or incident as an overall key performance metric.

Greater Engagement Leads to Greater Retention

“indicating that people who make requests are more likely to remain customers.”

Generally, customers that interact with our brands are more likely to remain customers than those that don’t. The practical implications of this finding are difficult to determine but any way that you can continue to engage with your customers would seem to be a good thing.

Linear Scales Don’t Work as Well as Segmented Scales

In general, transforming scales of the CFMs [Customer Feedback Metrics] to capture the proportion of most satisfied customers (as is done with the top-2-box customer satisfaction) or splitting customers up into groups (as is done with the promoters and detractors of the NPS) is preferable to using the full scale of the CFMs.

The link between customer responses and customer retention is not linear, so when analysing responses it is necessary to focus on the ends of the scale. The simple average customer satisfaction score is not as good an indicator as the top-2-box score. The Net Promoter Score is a better indicator than the unscaled response to the “would recommend” question.

Also:

We clearly find that the top-2-box customer satisfaction and the official NPS, which focus on the extremes, outperform the CFMs that use the full scale.
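To make the difference between full-scale and segmented scoring concrete, here is a minimal sketch in Python. The response data is made up for illustration, the 1-to-5 satisfaction scale is an assumption (the post does not say which scale the paper used), and the function names are hypothetical. The NPS calculation uses the standard definition: percentage of promoters (9 or 10) minus percentage of detractors (0 to 6).

```python
from statistics import mean


def top_2_box(scores, scale_max):
    """Share of respondents who chose one of the two highest scale points."""
    return sum(1 for s in scores if s >= scale_max - 1) / len(scores)


def net_promoter_score(scores):
    """Standard NPS on a 0-10 scale: % promoters (9-10) minus % detractors (0-6)."""
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100 * (promoters - detractors) / len(scores)


# Made-up survey responses, for illustration only.
csat = [5, 4, 4, 3, 5, 2, 4, 1, 5, 3]          # assumed 1-5 satisfaction scale
recommend = [10, 9, 8, 7, 6, 10, 3, 9, 8, 5]   # 0-10 likelihood to recommend

print("Mean CSAT (full scale):     ", mean(csat))
print("Top-2-box CSAT (segmented): ", top_2_box(csat, scale_max=5))
print("Mean recommend (full scale):", mean(recommend))
print("NPS (segmented):            ", net_promoter_score(recommend))
```

The full-scale averages treat every step on the scale as equally important, while the segmented versions only count respondents at the ends of the scale, which is the distinction the authors draw.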

Combining Metrics Can Lift Your Accuracy

In this case they are not suggesting adding top-2-box customer satisfaction to Net Promoter Score, but simply tracking and reporting on both indicators.

Combining metrics, especially the CES with the customer satisfaction–related CFMs, results in improved out-of-sample retention predictions. A dashboard of CFMs that measure different dimensions, as indicated in our conceptualization, is preferable to monitoring a single CFM.

Top-2-Box Customer Satisfaction and NPS Tie as the Best Metric to Use

The weights reveal a 49.6% certainty that the top-2-box customer satisfaction is the best CFM and a 49.1% certainty that the official NPS is the best CFM; thus, these two CFMs are almost equally likely to be the best CFM, and it is very unlikely that one of the other CFMs is the best.

NPS Is Best for Identifying Risky Customers

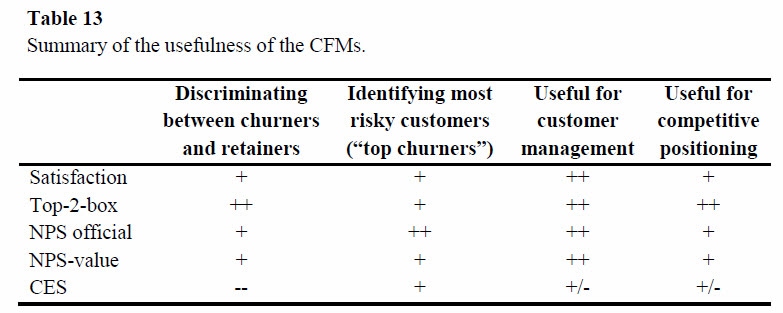

The authors kindly provided a handy dandy look up table for which metric to use when.

In summary the only two metrics you should be focusing on are top-2-box customer satisfaction and Net Promoter Score.

Having literally “written the book” on customer retention over 20 years ago (http://www.amazon.com/Customer-Retention-Integrated-Process-Customers/dp/0873892577) to compare retained customers to satisfied ones, this metric, while helpful, tells little about the level and intensity of customer purchase behavior, apart from the fact that the customer remained with the supplier. The customer can, as has been extensively proven, have a broad brand consideration and purchase set; and retention will reveal little about actual share of customer for the individual brand.

Satisfaction has been proven to have very little connection to customer behavior, for example in analysis by Fred Reichheld/Bain. Their work showed 0.00 correlation between change in ACSI scores for the companies surveyed and actual sales change. As for NPS, Tim Keiningham’s most recent research has shown similar non-connection to actual customer action: http://customerthink.com/want-or-need-higher-customer-satisfaction-loyalty-and-recommendation-scores-the-real-question-is-why/

As for Customer Effort Score, the University of Groningen results you’ve cited are not at all surprising (http://customerthink.com/is-there-a-single-most-actionable-contemporary-and-real-world-metric-for-managing-optimizing-and-leveraging-customer-experience-and-behavior/)

Michael, I agree with you

Adam, the important thing is what are we measuring?

Effort is only part of the buying or use experience. India required a great deal of effort and everyone said the difficulty stopped them from investing. When the markets opened up and started to grow companies entered to make money, and no one complained of the effort

NPS measures re-purchase intent, and therefore has a correlation with loyalty. It does not measure why the re-purchase intent is there (no diagnostic).

Satisfaction measures transactions, and is fleeting. You could be dissatisfied and continue with your supplier, or very satisfied with a transaction but never again buy from the supplier. You can see examples from your own experience.

People buy or do not buy because they perceive they get better value from one supplier vs the other. This is the Customer Value Added measure, which has been shown to predict market share and to be a leading indicator of it (the Vodafone CEO said they could predict market share a quarter out using CVA data to within 1% accuracy).

I would question whether this is even a worthy discussion! NPS may prove to be more accurate than CES on the whole supported by empirical evidence; however you could also argue that the two measures do not ‘compete’ 100% with each other.

The nature of the industry, company and/or product has an essential role to play here.

For example, you would not ask NPS if you were a provider of funeral services, as it would not be wholly appropriate. CES may however be more appropriate in this instance. Of course this is an extreme example to illustrate the point.

NPS is more relevant for a company with a clearly defined and differentiated proposition in a competitive market sector. Mercedes, Apple or Sears might legitimately ask this question.

CES may be more appropriate for an undifferentiated/ ‘vanilla’/ un-engaging/ price-led proposition – the provision of gas/ electricity services is not something of real interest to customers and they just want to get things done as quickly and easily as they possibly can. Their customers are not likely to even contemplate ‘recommending’, as it is not a brand that most people can relate to or engage with.

I personally cannot see the issue with just using both together as part of a wider CSAT or VoC exercise. I’m not a big fan of transactional NPS or CES, as I feel that they are both contrived. Combining metrics seems wholly logical to me. Why does it have to be one thing or another?

Adam, thanks for sharing highlights from this study, and providing a link. Very interesting findings from this Dutch study.

The research seems exceptionally well done, although I found it challenging to follow all of the statistical arguments.

The data is from Dutch consumers evaluating service firms in 2010 and 2012. Like all research, the findings shouldn’t be extrapolated to other markets unless they behave like the sample.

That said, I’m not surprised at all by the findings. I’ve been following the CLM (Customer Loyalty Metric) debate for nearly 15 years, and have done some of my own research on what business people are using.

It’s interesting how hard the loyalty industry and enterprising consultants keep working to find a better metric than CSAT. In the pre-NPS days, Reichheld used Enterprise Rental Cars as a leading example. What did Enterprise use? Top Box CSAT. Other academic research (notably Morgan & Rego, 2006) has found the “best” overall CLM is CSAT.

Still, all of this is of mostly academic interest. Because in my most recent benchmark study of customer-centric leaders, I found no difference in how business performance Leaders used CLMs vs. Laggards. None.

On average, both Leader and Laggard companies use 3 different metrics. And what were the 3 most commonly used CLMs? CSAT, NPS, and Likelihood to Recommend. Only 5% of companies are using just one metric.

Far more important in distinguishing Leaders from Laggards was a bias to act on feedback, along with a number of other practices in my new report Leadership Practices for Customer-Centric Business Management.

Thanks for the comments everyone — seems like a lively discussion topic.

Michael, it would be better if you read the post and/or the associated supporting material before commenting. The research, and my post, point out that average CSAT is not well linked to future purchases but top two box CSAT is quite a good predictor.

Gautam, you are correct that neither NPS, nor CSAT, nor any other single point measure can be diagnostic. The goal, typically, for these measures is to serve as a proxy for loyalty that, when combined with other, diagnostic, data, can be used to improve the business in meaningful ways.

Ian — I think the main problem with CES is that while ease is typically important it is not broad enough to be a good predictor of loyalty. I know from the many studies we have done for our clients that there are several common predictors: ease, reliability, responsiveness, along, typically, with some industry specific vectors. NPS and, as this study indicates, top two box CSAT are quite good proxies for loyalty but CES is not.

Bob — thanks for your considered comments. I too found the stats challenging. You are correct that the “which is better” debate is somewhat academic if companies don’t actually use the data to make change in their business. Doing something, almost anything, would be a better investment for most companies than arguing the last 1% on which metric is technically best.

Adam – I guess the whole thing is academic, as there is nothing stopping people from using more than one measure at once. I would encourage it and it seems that you would too.

Do bear in mind that we can make statistics say many different things. I’d be interested to understand more about the way the analysis was done. With different types of segmentation of data, maybe not by industry but by another dimension, you may find that CES is a good predictor of loyalty for undifferentiated/ ‘vanilla’/ un-engaging/ price-led products, sectors and businesses.

I have been a customer of the same bank for 20 years as they give me a great experience because of ease and reliability. I would also recommend them. In this instance, CES and NPS scores from me would be high.

But if you asked me to answer the same questions about having kids, the NPS would be high but the CES would be low!! The same might apply to White Water Rafting!

It all depends. I just don’t think that the two scores are directly interchangeable.

🙂

If by future purchases you mean customer retention, as discussed in your post and response, then my comments stand. What was stated, and will be repeated, is that satisfaction says relatively little about the intensity of customer behavior. The finding that top two box CSAT correlates to (not causes or drives) retention is not very robust or definitive. Again, customers can, and often do, have broad purchase and consideration sets. They can even be trapped into staying with a vendor, while reducing the amounts purchased. So, retention is a very low bar for performance. CSAT, whether mean, top two box, or even top box, frequently does not connect well to share of customer, in part because it is too passive, tactical, transactional, reactive and attitudinal to be truly actionable at a granular, consistent, and universal level.

Ian – Honestly, I don’t have the background to explain or validate the stats in the paper as they are more advanced than any I have used. However, I would encourage you to review the source paper for more details on the procedures used. As Bob says in his comment, it is an unusually thorough process, making the paper worthwhile to review in detail.

First, I want to thank you Adam for highlighting Evert de Haan’s, Peter C. Verhoef’s, and Thorsten Wiesel’s research in the International Journal of Research in Marketing. Your conclusion that this vindicates the Net Promoter Score (NPS), however, is definitely overstated. I am not aware of any serious scientific research that stated NPS could not be tied to customer retention. In fact my own research, i.e. “The Value of Different Customer Satisfaction and Loyalty Metrics in Predicting Customer Retention, Recommendation and Share-of-Wallet” showed that NPS classifications linked to retention in 2007.

The main scientific papers testing NPS focused on its claimed “superiority” to other metrics, and its claimed linkage to growth. Therefore, I find disturbing your assertion that the research of de Haan, Verhoef, and Wiesel in any way challenges the findings of “A Longitudinal Examination of Net Promoter and Firm Revenue Growth,” which I also coauthored. Specifically, my coauthors and I very clearly state the purpose of the research (p. 41):

“The purpose of this study is to examine the research and findings regarding Net Promoter (Reichheld 2003; Satmetrix 2004). In particular, we attempt to replicate these findings using a methodology that corresponds to that which Reichheld (2003, 2006c) and Satmetrix (2004) use. To date, no longitudinal, peer-reviewed, cross-industry examinations have been conducted on this specific Net Promoter metric. Therefore, rather than establish a set of theory-based hypotheses, as is common in most scientific investigations, we test the overarching claim regarding Net Promoter—namely, that Net Promoter is the “single most reliable indicator of a company’s ability to grow” (Netpromoter.com 2006).”

It would be hard to argue that this investigation did not do exactly what it claimed to do. Moreover, the main findings of this research were validated in a 2013 study reported in the International Journal of Research in Marketing by Van Doorn, Leeflang, and Tijs. Specifically, they find (p. 314)

“We find that all metrics perform … equally poor for predicting future sales growth and gross margins as well as current and future net cash flows. … the predictive capability of customer metrics, such as NPS, for future company growth rates is limited.”

With regard to the ability to link NPS (or customer satisfaction, etc.) to customer buying behavior, the problem is not retention. It is that these metrics do not link to share of wallet. In most industries, far more customers shift their spending among the brands that they use in a category than completely defect. Unfortunately, research that I coauthored with a team of leading academics finds that changes in NPS or satisfaction explain less than one-half of one percent of changes in share of wallet (Keiningham et al 2015: Figure 4 and Table AIII). This is beyond awful.

Moreover, it’s very easy to prove that NPS, satisfaction, and most commonly used loyalty metrics are VERY weakly correlated to share of wallet. In fact, my coauthors and I implore readers of our book, The Wallet Allocation Rule, “Please don’t take our word for it—prove it for yourself using simple spreadsheet software.” Fortunately, this is very easy to do (as we show in the figure in this article, which comes directly from the book: https://www.linkedin.com/pulse/how-really-grow-share-timothy-keiningham).

Finally, I want to address your comment:

“What’s nice about this paper is that the researchers don’t seem to have an axe to grind. They don’t work for one of the competing consulting firms that promote this question or that question. Hopefully that makes their research more balanced.”

I don’t know any serious scientific researchers that would allow their reputations to be compromised by their occupations or from having an axe to grind. In academic research, your reputation is all you’ve got. And there is no coming back from being proved a liar or shill. Therefore, if you are referring to anyone in particular, you need to call the individual or individuals out by name instead of making unsupported allegations. With regard to my own work, I always work with academic colleagues of the highest integrity and capability as coauthors as nothing is more important than reporting the truth.

Again, thank you for highlighting the fine scientific research of Evert de Haan, Peter C. Verhoef, and Thorsten Wiesel.

REFERENCES

Timothy Keiningham, Lerzan Aksoy, Luke Williams, with Alexander Buoye (2015), The Wallet Allocation Rule: Winning the Battle for Share, Hoboken, NJ: John Wiley and Sons.

* New York Times and USA Today bestseller

Keiningham, Timothy L., Bruce Cooil, Lerzan Aksoy, Tor Wallin Andreassen, and Jay Weiner (2007), “The Value of Different Customer Satisfaction and Loyalty Metrics in Predicting Customer Retention, Recommendation and Share-of-Wallet,” Managing Service Quality, vol. 17, no. 4, 361-384.

* Winner of the Outstanding Paper (Best Paper) award from Managing Service Quality.

Keiningham, Timothy L., Bruce Cooil, Tor Wallin Andreassen, and Lerzan Aksoy (2007), “A Longitudinal Examination of Net Promoter and Firm Revenue Growth,” Journal of Marketing, vol. 71, no. 3 (July), 39-51.

* Winner of the Marketing Science Institute/H. Paul Root Award for the paper that had the most significant contribution to the advancement of the practice of marketing.

Keiningham, Timothy L., Bruce Cooil, Edward C. Malthouse, Alexander Buoye, Lerzan Aksoy, Arne De Keyser, and Bart Larivière (2015), “Perceptions Are Relative: An Examination of the Relationship between Relative Satisfaction Metrics and Share of Wallet,” Journal of Service Management. vol. 26, no. 1, 2-43.

Van Doorn, Jenny, Peter SH Leeflang, and Marleen Tijs (2013), “Satisfaction as a Predictor of Future Performance: A Replication,” International Journal of Research in Marketing. vol. 30, no. 3, 314-318.

Tim – thanks for providing a thoughtful and reasoned response.

Just to pick up on a couple of points.

With respect to challenging the findings of your co-authored 2007 paper, I am simply reflecting the views of the writers, as quoted in my extract.

On the topic of having axes to grind: this is of relevance in all areas of scientific research, from sports drink companies sponsoring athlete performance studies to diet advice research sponsored by food manufacturers. In this case I simply seek to state that, as this research was performed by an apparently arm’s-length group of people, it is freer from the perception of taint than research performed by organisations closely aligned to one cause or another. If you believe the wording in the post does not convey this, I’m happy to adjust it.

Finally, I would be interested in your view of the source paper itself and how it interacts with the papers you have published.

Hi Adam,

To be clear, my problem isn’t with the research of de Haan, Verhoef, and Wiesel. While they overstate the conclusions of my coauthors’ and my work by writing “in contrast with previous research (e.g., Keiningham, et al., 2007b), monitoring NPS does not seem to be wrong in most industries,” your statement regarding our work AND the conclusion of de Haan et al.’s work is completely wrong.

First, our research made clear that in no way could NPS be classified as “the single most reliable indicator of a company’s ability to grow” (as was claimed). Moreover, it embarrassingly disproved claims by its creator that 1) satisfaction was a much worse predictor than NPS, and 2) the relationship between the American Customer Satisfaction Index and growth had a 0.00 correlation, and it did so using some of the same data that was used to demonstrate the strength of NPS in the book The Ultimate Question. That was the sole purpose of the research, and it proved its case conclusively.

These findings, however, likely led many in the scientific community to view NPS as all hype. Given the over the top claims attributed to the metric when it was introduced, and its derisive dismissal of satisfaction as a metric (despite overwhelming scientific evidence to the contrary), it is easy to understand why. As I noted in my earlier response, there is no going back with the scientific community once your credibility is gone. [You must admit that it is ironic that the argument has gone from “it’s the single most reliable indicator” that is much better than satisfaction to, “NPS is almost as good as satisfaction in predicting retention” as a validation for the metric.]

Again, regarding my coauthors’ and my research, we never said NPS did not link to retention. We said it wasn’t superior to other metrics. And every subsequent peer reviewed study of which I am aware has supported this finding (including the research of de Haan, Verhoef, and Wiesel).

Your statement, however, draws inferences that cannot be made by de Haan et al.’s research, nor that of my coauthors and me. Specifically, you write: “Much has been made of the work of Keiningham, et al. in “proving” NPS is not predictive. This comprehensive research rejects that conclusion.”

First, our work did not say “NPS is not predictive,” nor did de Haan et al. say this about our work. Second, de Haan et al. did not reject the conclusions of our work. In fact, they did not even examine the topic of our work.

Regarding “axes to grind” and “sponsorships” impacting scientific research, it is important to note that this topic is one that is seriously addressed in the scientific community. With regard to our field of study (primarily the fields of marketing and service management), there isn’t a lot of “sponsored” research. Moreover, authors in the most prestigious journals must indicate such sponsorship when submitting publications to these journals.

Now, specifically to the peer reviewed scientific research on NPS, do you have any evidence indicating that occupation, sponsorship, axes to grind or other potential biases impacted the investigations in any way? This is a serious accusation. I know most of these authors personally, and have no reason to doubt their scientific integrity and relentless quest for the truth. As allegations of scientific wrongdoing damage the public’s faith in the entire field, if there is reason to believe that this is the case then we need to know. And if there isn’t, then we need to avoid casting doubt on the scientific literature when there is no evidence.

Additionally, you ask for my “view of the source paper itself and how it interacts with the papers you have published.” My personal view is that this is very good research in a very good journal. It confirms what we have known for a long time—namely that the impact of satisfaction (and other perceptual metrics) on customer retention is nonlinear and asymmetric. Believe it or not, the Return on Quality work I did with Roland Rust and Tony Zahorik in 1994 and 1995 said exactly that. Moreover, The Customer Delight Principle that I wrote with Terry Vavra said the same thing.

As for where this paper fits within the literature on NPS and customer satisfaction, I believe that it is a reminder of the importance of keeping customers highly satisfied in order to ensure their retention. Clearly, having customers who are highly satisfied and willing to recommend the brand is a good thing.

In closing, I believe that the focus on metrics is misplaced. My research with several leading academics reveals that the primary problem isn’t the choice of metric (e.g. satisfaction, NPS, etc.). Rather, it is the way these metrics are measured and analyzed. My coauthors and I make this crystal clear in our book, The Wallet Allocation Rule. Specifically, it’s not the metric or the score you get from using it that matters. What matters is the relative rank that this score corresponds to vis-à-vis competing brands the customer also uses. To quote Bob Thompson’s endorsement of the book, “After years of arguing about which metric is best, this groundbreaking [research] reveals what really matters: how your brand compares to your competitors’ in your customer’s mind.” It is my hope that we can move from a focus on finding the “best” metric to systems that truly identify why customers divide their wallets among competing brands.

Best…

Tim

REFERENCES

Keiningham, Timothy, Lerzan Aksoy, Luke Williams, with Alexander Buoye (2015), The Wallet Allocation Rule: Winning the Battle for Share, Hoboken, NJ: John Wiley and Sons.

* New York Times and USA Today bestseller

Keiningham, Timothy L., Bruce Cooil, Tor Wallin Andreassen, and Lerzan Aksoy (2007), “A Longitudinal Examination of Net Promoter and Firm Revenue Growth,” Journal of Marketing, vol. 71, no. 3 (July), 39-51.

* Winner of the Marketing Science Institute/H. Paul Root Award for the paper that had the most significant contribution to the advancement of the practice of marketing.

Keiningham, Timothy L., and Terry G. Vavra (2001), The Customer Delight Principle: Exceeding Customers’ Expectations for Bottom-line Success. New York, NY: McGraw-Hill/American Marketing Association.

Rust, Roland T., Anthony J. Zahorik, and Timothy L. Keiningham (1994), Return on Quality: Measuring the Financial Impact of Your Company’s Quest for Quality. Burr Ridge, IL: Irwin Professional Publishing.

Roland T. Rust, Anthony J. Zahorik, and Timothy L. Keiningham (1995), “Return on Quality (ROQ): Making Service Quality Financially Accountable,” Journal of Marketing, 59 (April), 58-70.

* Winner of the Alpha Kappa Psi Foundation Award (best paper) for the article making the most significant contribution to the advancement of the practice of marketing. (The award was later renamed the Marketing Science Institute/H. Paul Root Award).

Whenever this much dust is kicked up about an issue, my reference is invariably the statement that Jaggers, the lawyer in Dickens’ Great Expectations, makes to Pip, the novel’s protagonist: “Take nothing on its looks; take everything on evidence. There’s no better rule.” Tim Keiningham’s evidence on NPS is very thorough, clear and compelling, as are his two explanations here. Re. other metrics, CES has multiple analytical and application challenges. In macro terms, CSAT, which is a superficial and tactical measure, connects to attitudes resulting from customer-supplier interaction, but it does not provide as much guidance about behavior or potential behavior. However, dissatisfaction (in part because it drives stronger emotions and memories) does connect to downstream customer action.

Bob Thompson, Ian, Gautam, Tim and I appear to pretty much agree that the acid test of any performance metric, or set of metrics, is the validity, consistency and reliability of application to key customer experience elements. If there is evidence that the metric(s) have solid actionability, there’s no better rule.

Great discussion. I recommend checking out a post by Evert de Haan and Peter Verhoef about their research that is referenced here. Here’s a link to the post on CustomerThink: http://customerthink.com/how-valuable-are-the-net-promoter-score-and-other-customer-feedback-metrics