One of the first steps most B2B tech companies take on their Customer Experience journey is to implement a CSAT program. CSAT programs are easy to implement and provide hard metrics on how your Customer Support, Training, Professional Services, etc. are doing.

If your program is like most, it likely consists of sending an email to customers after each event (Support case closed, Training class completed, etc.) to get a satisfaction score for the experience, perhaps followed by a couple more questions.

Limitations of CSAT for B2B Tech Companies

On the surface, CSAT seems like a very good way of measuring functional satisfaction, but for B2B tech companies it has a number of significant limitations.

1. B2B tech customers’ opinions are based on the totality of the experience, not the individual events that CSAT measures

A customer may have given a training class a high mark, but the true value doesn’t become clear until they have actually put their training to work. They may have walked out feeling pretty good, but only later figure out that the class didn’t adequately prepare them. Or they may have had a great class, only to find that the vendor lacks the supporting content (knowledge bases, online training, documentation) that they need to build on the class and do their job.

When it comes to Support, a customer may have had 9 good support experiences, but a poor experience on the 10th call, which was the one that mattered the most to them. While CSAT would record a 90% success rate, in fact, your customer could be very dissatisfied. The inverse is true as well. If you nail that Severity 1 case, customers could have a very positive view of support even if they had a couple of mediocre experiences on less important cases.

CSAT Can Provide Misleading Data on How Customers Truly Feel About Support

2. CSAT only measures the experience of customers’ frontline employees and does not include the manager and director level

It isn’t that the opinions of the front line don’t matter, but the opinions of the managers and directors, who are typically the champions, are critical. While they may not have direct involvement, they know how things are going and can put it into the context of the complete relationship, which front line staff typically cannot do. As one manager commented, “We’ve been very happy with <Vendor X>’s onboarding. I wasn’t the one who went to training, but believe me, I would have heard if it didn’t go well and I would be well aware if they didn’t know how to use <Product Y>.”

3. CSAT is a wasted opportunity

When it comes to getting customers to give feedback, it is the number of times you ask, not the number of questions you ask, that drives response rates. Once they are taking a survey, customers are generally fine taking 5 to 10 minutes to answer questions. When you just ask 1 or even a few questions, you’ve used up a critical client interaction to learn very little.

A New Approach to Customer Feedback

Due to these limitations, we recommend that our clients revamp their customer feedback program around the following four principles:

1. Send surveys quarterly or semiannually

By reaching out less often, you’ll get a higher response rate when you do send out a survey.

2. Send longer, two-tier logic-driven surveys

Once you get customers to engage, use the opportunity to get more information. In one survey, you can ask about NPS, all of your functional areas, product priorities, their purchase decision (why you and not competitors), and more. To accommodate customers who only want to answer a few questions, we recommend a two-tier approach where customers answer about 5 must-have questions and then are asked if they are willing to answer a few more. On average, about half of customers will go on and answer the full survey.

Two Tier Surveys Maximize Client Feedback by Providing Customers an Option to Take a Short or Long Survey

3. Ask satisfaction at the function level on a 1 to 5 scale

As described above, you’ll want to ask “How satisfied are you with <Support, Training, Onboarding>?” not how satisfied they were with an individual event. These function level scores provide a much more accurate picture of your customers’ experience than individual event scores.

- We recommend a 5-point scale. Larger ranges do not provide additional insight as customers tend to gravitate to the top, bottom and middle

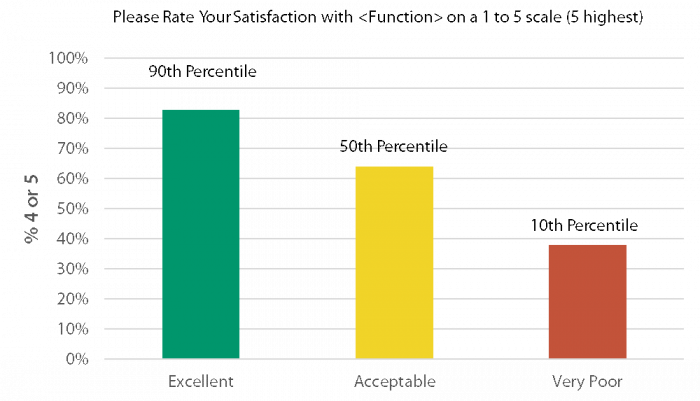

- Scores are evaluated as a % rating the function 4 or 5 and can be compared to Topline’s benchmarks based on over 5,000 survey responses so you can evaluate your performance vs. other tech companies

Topline Functional Score Benchmarks
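
To make the scoring rule above concrete, here is a minimal Python sketch of computing the percentage of respondents who rate each function a 4 or 5. The function name `top_two_box` and the sample ratings are hypothetical, made up purely for illustration; they are not Topline data or benchmarks.

```python
from collections import defaultdict

def top_two_box(responses):
    """Return, per function, the percentage of 1-5 ratings that are 4 or 5."""
    # function name -> [count of ratings that are 4 or 5, count of all ratings]
    counts = defaultdict(lambda: [0, 0])
    for function, rating in responses:
        if not 1 <= rating <= 5:
            raise ValueError(f"rating out of range: {rating}")
        counts[function][1] += 1
        if rating >= 4:
            counts[function][0] += 1
    return {f: round(100 * top / total, 1) for f, (top, total) in counts.items()}

# Hypothetical sample data: (function, rating) pairs from one survey wave
sample = [
    ("Support", 5), ("Support", 4), ("Support", 2), ("Support", 3),
    ("Training", 5), ("Training", 4), ("Training", 4), ("Training", 1),
]
scores = top_two_box(sample)
print(scores)
```

The resulting percentage per function is the number you would then compare against a benchmark.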

4. Use your transaction data to get event-level feedback

Just because you don’t send out surveys after each event doesn’t mean you cannot get event-level data. Because you know which individuals submitted which support cases, took a training class, went through onboarding, etc., this data can be matched back to the survey results to show in detail how you performed on individual events. If you are using a robust survey platform, you can even take this to the next level by showing event-level questions to people who participated in training, called support, etc.
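
The matching step described above can be sketched in a few lines of Python. This is only an illustration under assumptions: the record layout, the `EVENT_FUNCTION` mapping, and the use of email address as the matching key are all hypothetical, and a real program would pull both data sets from your CRM and survey platform.

```python
# Hypothetical mapping from transaction type to the survey's function name
EVENT_FUNCTION = {"support_case": "Support", "training_class": "Training"}

def attach_scores(events, survey_scores):
    """Pair each event with the function-level score its participant gave, if any."""
    matched = []
    for event in events:
        function = EVENT_FUNCTION.get(event["type"])
        # Emails are lowercased so "Ana@..." and "ana@..." match the same respondent
        score = survey_scores.get(event["email"].lower(), {}).get(function)
        if score is not None:
            matched.append({**event, "function": function, "score": score})
    return matched

# Hypothetical transaction records
events = [
    {"id": "case-101", "type": "support_case", "email": "Ana@example.com"},
    {"id": "class-7", "type": "training_class", "email": "ana@example.com"},
    {"id": "case-102", "type": "support_case", "email": "raj@example.com"},
]
# Hypothetical survey results keyed by respondent email
survey_scores = {"ana@example.com": {"Support": 2, "Training": 5}}

matched = attach_scores(events, survey_scores)
for row in matched:
    print(row["id"], row["function"], row["score"])
```

Events whose participant did not answer the survey are simply left out, which keeps the event-level view limited to people who actually gave a score.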

Hi, is it necessary to close the loop with customers who have given us a low rating (detractors) to understand the reason for the low rating and solve the issue? Is closing the loop worthwhile from a cost-benefit standpoint, considering that you need to deploy teams to get in touch with customers?

The short answer to your question is yes: it is important to close the loop. The best practice is to import the survey data into the CRM or Customer Success platform so that follow-ups are automatically assigned to the right person. The less sophisticated way to do it is to distribute the data to the individual Customer Success/Account Managers and ask them to follow up directly. The biggest difference between manual and automated is the compliance rate among front line personnel. When it is automated, the calls get done because there is visibility and tracking. When it is not automated, the front line staff get to it when they have time, which for some customers can be never.
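
As a rough illustration of the automated assignment described above, the Python sketch below turns low scores into tracked follow-up tasks. Everything in it is an assumption for the example: the field names, the `account_owners` lookup, and the threshold of 2 on the 5-point scale are hypothetical, and a real CRM or Customer Success platform would handle this through its own import and workflow features.

```python
def build_follow_ups(responses, account_owners, threshold=2):
    """Create one open follow-up task per response scoring at or below the threshold."""
    tasks = []
    for r in responses:
        if r["score"] <= threshold:
            tasks.append({
                "account": r["account"],
                # Fall back to a visible placeholder so no task is silently dropped
                "owner": account_owners.get(r["account"], "unassigned"),
                "reason": f'{r["function"]} rated {r["score"]}',
                "status": "open",
            })
    return tasks

# Hypothetical survey responses and account ownership
responses = [
    {"account": "Acme", "function": "Support", "score": 2},
    {"account": "Globex", "function": "Training", "score": 5},
    {"account": "Initech", "function": "Onboarding", "score": 1},
]
account_owners = {"Acme": "dana", "Globex": "lee"}

tasks = build_follow_ups(responses, account_owners)
for t in tasks:
    print(t["account"], t["owner"], t["reason"], t["status"])
```

Keeping a status on each task is what provides the visibility and tracking mentioned above.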

The other factor to keep in mind is that you need to follow through, not just follow up. Customers appreciate a follow-up call, but unless their issues are eventually resolved, they lose faith. We had one client that followed up with a number of unhappy customers, but when we recontacted those customers a year later to hear how things had changed, the follow-up call without follow-through had become just another source of dissatisfaction.