The Net Promoter Score (NPS) is a go-to customer satisfaction metric. It relies on a simple question: How likely is it that you would recommend <a product or service> to a friend or colleague? You respond on a 0 to 10 scale, and the net score is computed by subtracting the percentage of 0-6 responses, a.k.a. “detractors,” from the percentage of “promoters,” those who give a 9 or 10 rating.
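For readers who want the arithmetic spelled out, here is a minimal Python sketch of that calculation. The function name `net_promoter_score` and the sample ratings are my own illustrative assumptions, not part of any vendor's tooling. Responses of 7 or 8 count toward the total but are neither promoters nor detractors.

```python
from typing import Iterable


def net_promoter_score(ratings: Iterable[int]) -> float:
    """Return NPS: percent promoters (9-10) minus percent detractors (0-6)."""
    ratings = list(ratings)
    if not ratings:
        raise ValueError("need at least one rating")
    if any(r < 0 or r > 10 for r in ratings):
        raise ValueError("ratings must be on the 0 to 10 scale")

    promoters = sum(1 for r in ratings if r >= 9)   # 9 or 10
    detractors = sum(1 for r in ratings if r <= 6)  # 0 through 6
    # 7s and 8s fall in neither group but still count in the denominator.
    return 100.0 * (promoters - detractors) / len(ratings)


# Hypothetical sample: 5 promoters, 3 detractors, 2 in between.
sample = [10, 9, 9, 8, 7, 6, 3, 10, 2, 9]
print(net_promoter_score(sample))  # 20.0
```

The result ranges from -100 (all detractors) to +100 (all promoters), and the number by itself says nothing about why anyone answered as they did.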

NPS’s appeal is the simplicity and also the score’s widespread use, which allows for cross-industry and within-industry comparisons. The drawback, as many have noted, is opacity: NPS alone explains nothing. That’s why I’m big on survey questions that allow free-text response – there’s no substitute for asking Why did you give that score? – and on review analysis and social listening and the use of sentiment analysis techniques that link feelings to product aspects and issues. But let’s consider the opacity issue solvable and turn to proper and improper NPS use. We’ll do that via three examples…

Bad: Airbnb

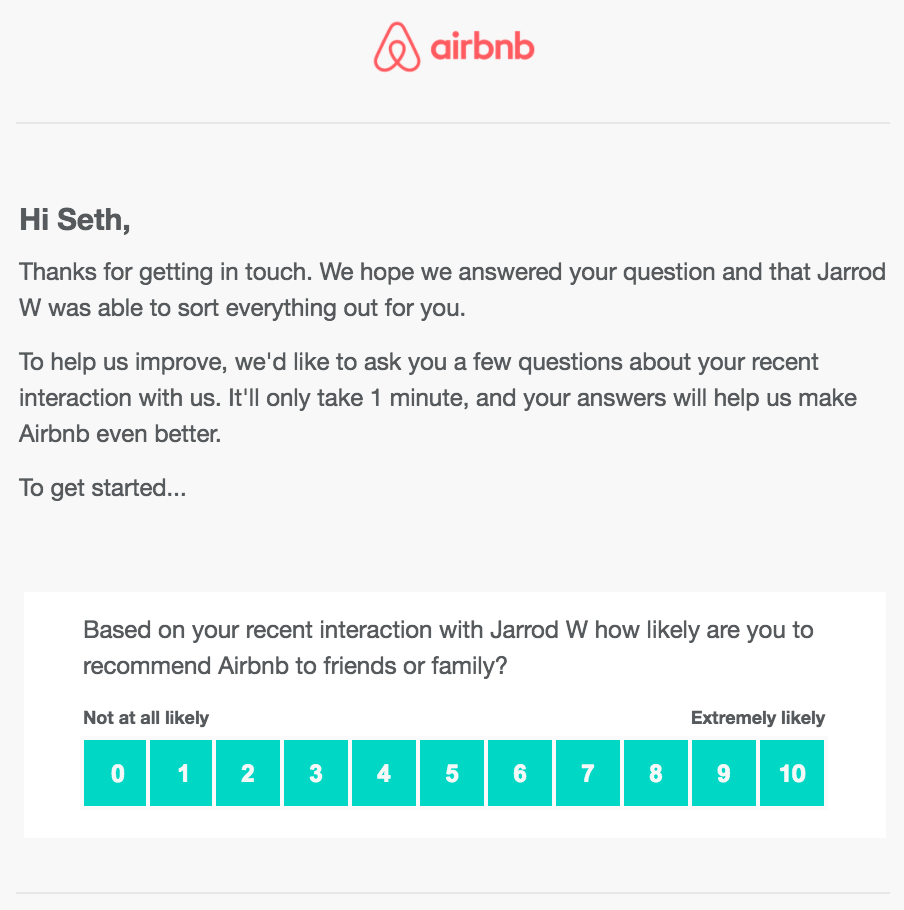

Check out this Airbnb survey I received, in response to a customer-service interaction I had in the wake of a host’s reservation cancellation two days before my arrival date:

My first reaction?

Airbnb doesn’t know whether agent Jarrod W was able to help? Really? Why not?

Actually, Jarrod W wasn’t able to “sort everything out” for me. As a result, I think that Airbnb’s resolution of issues caused by late host cancellations sucks. But I still like Airbnb overall, so here’s the dilemma: Jarrod W’s ineffectiveness won’t actually affect my likelihood to recommend, but I don’t want to imply that he was effective.

Airbnb chose the wrong measure.

Better to measure customer effort when you seek to understand service/transaction satisfaction: How hard was it to get the support you needed?

This said, I’ll relay another opinion, from Jean-François Damais, Deputy Managing Director at Ipsos Loyalty.

We found that measuring customer effort is not enough. It is the customer::company effort ratio that really matters, company effort being defined as customers’ perception of how much effort a company puts in to deal with their issues. When customers feel they work harder than companies to deal with a CX issue, churn rates and bad word of mouth are extremely high.
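To make the ratio idea concrete, here is a rough Python sketch. Everything specific in it is an assumption of mine rather than the Ipsos method: the function name `effort_ratio`, the 1-to-5 effort scales, and the reading that a value above 1 means the customer feels they worked harder than the company.

```python
def effort_ratio(customer_effort: float, company_effort: float) -> float:
    """Ratio of perceived customer effort to perceived company effort.

    Both inputs are assumed to be survey ratings on the same scale
    (hypothetically 1 = very little effort, 5 = a great deal of effort).
    """
    if customer_effort <= 0 or company_effort <= 0:
        raise ValueError("effort ratings must be positive")
    return customer_effort / company_effort


# Hypothetical respondent: worked hard (4) and felt the company did little (2).
ratio = effort_ratio(4, 2)
print(ratio)        # 2.0
print(ratio > 1.0)  # True: the customer feels they out-worked the company
```

The point of pairing the two ratings is that the same customer effort score reads very differently depending on how hard the customer thinks the company tried.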

Bottom line: Simplistic metrics, misapplied, won’t get you far.

Better: Travelocity

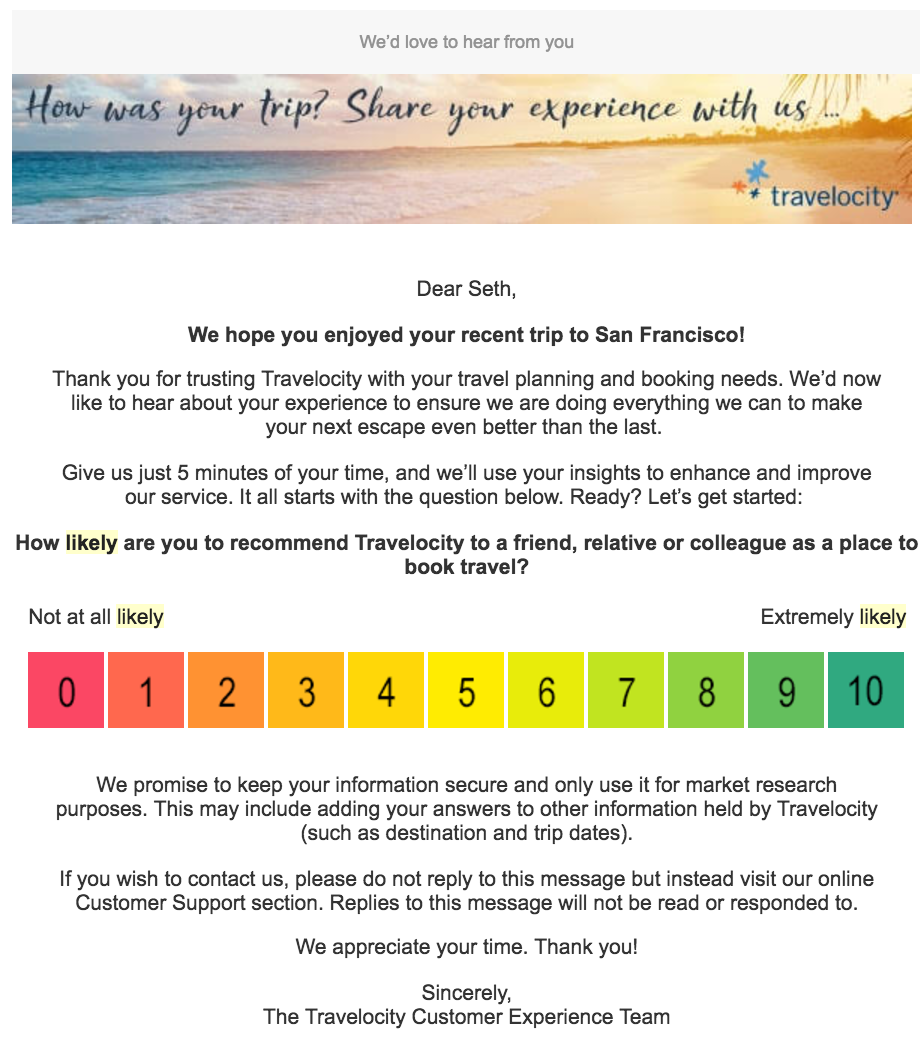

By contrast, Travelocity does it better although there’s still room for improvement. Here’s the classic NPS question, this time focused on the platform itself:

Note an extra cue given to the customer: color-coded choices add graphic appeal not present in Airbnb’s survey, without adding complexity. The flaw in this survey is, however, a missing bit of complexity. Fact is, I am unlikely to recommend Travelocity as a place to book travel because the question rarely comes up. The Airbnb survey had the same flaw. If I answered the question as asked, my response would be a 1 or 2 even though I was satisfied and would have answered 8 or 9 if asked “Are you satisfied with Travelocity travel booking?” Instead, do what Hilton does in my next example. Add a few words in order to focus the question on real-world situations.

Bottom line: Wording counts.

Best: Hilton

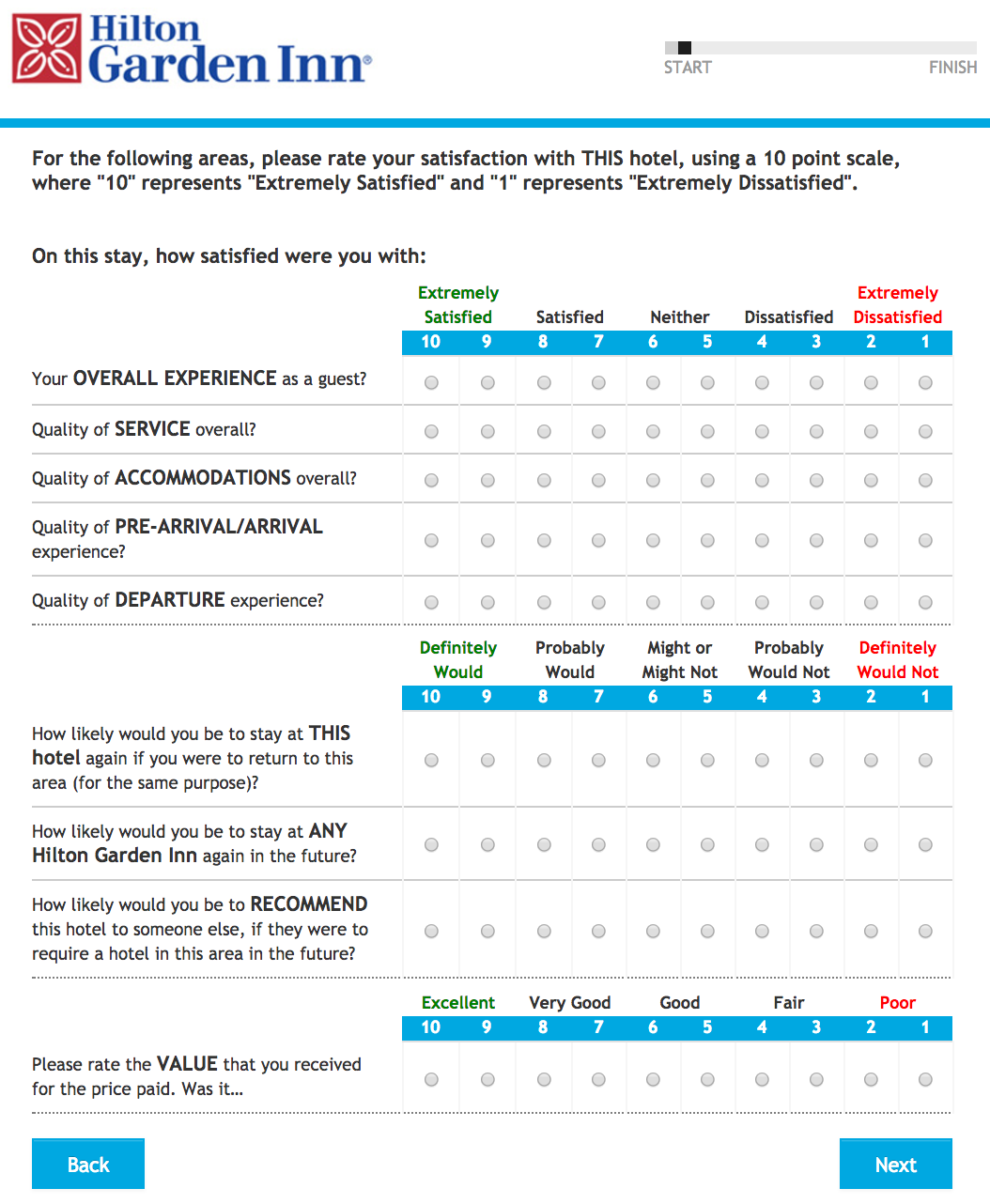

Hilton sacrifices simplicity for precision, in a survey I received recently. Check it out in the image below: Satisfaction questions and the Net Promoter “How likely would you be to recommend…” question, each type properly used. The survey finishes by asking “value that you received for the price paid.” I like that Hilton asks about perceived value per my article, Perceived Value Is Key To Customer Experience. And the use of a value ratio is in keeping with the effort-ratio guidance offered by Jean-François Damais, quoted earlier.

Here’s a shot of the Hilton survey:

One small curiosity: Hilton’s scale descends from 10 to 1 rather than, as in the classic Net Promoter survey, ascending from 0 to 10. I’m guessing that the survey designers prioritized offering a single type of scale for the three types of question over using the conventional scale – the number of choices and the order – for each survey type. Good choice. My only recommendation is to add some free-text response questions, to enable you to get at reasons and root causes that explain responses.

So in conclusion: Airbnb, don’t take my low score to mean I’m an Airbnb detractor. I’m not. Rework your survey approach and you’ll find that out. Travelocity, wording counts. If you don’t get it right, you risk getting distorted answers. And Hilton: Nice going. Give your customer experience/market research staff a bonus. I’d recommend them, if a business asked me who to hire to design a customer survey.

I don’t know how to answer the question about making recommendations because I’m philosophically opposed to making them. I’m not willing to lose a friendship or annoy a relative by making a recommendation that turns out to be wrong. I might like the service or product that I bought, but the person to whom I made the recommendation may buy another one that is not so good. I’m especially bothered about making recommendations about financial services where the other person’s money is at risk. As anyone who knows anything about statistics knows, one transaction does not provide a valid measure of a broad range of services or products from a company.

Since I take a lot of surveys, what suggestions do you have for me when I encounter this question?

Hi Seth, thanks for being a customer as well as your feedback on one of our Airbnb surveys. It looks like you are comparing apples to oranges, however. Travelocity and Hilton are measuring a trip or a stay and ours is measuring the interaction of getting help with an issue. The way your blog is written suggests that the single question embedded in the email invitation is the survey itself, but it’s only the first (and most important) one. The survey content continues after the recommend question (I imagine Travelocity’s is the same and theirs probably looks similar to Hilton’s. Our post stay survey is a bit different than either of theirs). We agree that much of the gold in a survey comes from comments, so our survey includes a comment field in addition to several other key attributes we know are important to our customers as well as our performance management purposes.

Desirree, thank you for your comment. I compared the follow-up survey messages I received from three different hospitality providers. A key point of my article is that in asking “Will you recommend us based on a customer service interaction,” Airbnb asked the wrong question. I see that you don’t dispute that point. You also don’t respond to my statement that Airbnb should have known that my customer service issue wasn’t resolved, to me a CX failure and not just a survey problem. Further, your comment exposes a repercussion: Airbnb’s NPS misuse meant that I never got to the rest of the survey that you say was beyond this first question. Will Airbnb correct these survey issues?

Further, you used your comment to defend Airbnb’s survey approach. That’s legit, but I’ll further observe that yours is the first Airbnb interaction I’ve had in response to my article, and you choose not to acknowledge the Airbnb customer service failure I reported. It’s a shame to miss a CX opportunity, especially one that’s publicly exposed.

Hi Seth, I own our NPS programs and actually did not set out to defend our approach as I do not see a need to – only wanted to point out that your comparisons are not accurate. I also tend not to dispute in a public forum, only clarify if there’s a misunderstanding. We actually get quite a lot of actionable insight from our survey. It sounds like because you did not like the way the question was worded, you did not actually see it and I’m sorry we did not get your feedback. I did not observe a ‘customer service failure’ in your blog – but rather noted that you experienced a host cancellation and did not like the policy. I am sorry you experienced a cancellation. That can be difficult. I’m more than happy to take your feedback personally, however, if you’d like to chat or reach out to me via LinkedIn.