In a variety of markets, vendors offer benchmarking to their customers to help them understand how they’re doing and where they could improve. For example, in workforce management, ADP, APQC, SAP SuccessFactors, ServiceNow, Workday, and other vendors offer benchmarking products or services.

Generally, a benchmarking insight consists of one or two performance indicators and a peer group, and it may be expressed as a graph or picture, or as a sentence. An example sentence is “Acme Inc. has the highest employee turnover rate of any profitable manufacturer of industrial products in New England.” Customers such as Acme Inc. then consider whether and how to take action based on the insight, perhaps aided by the vendor who supplied it.

When customer benchmarking is done manually, typically only a small number of insights are generated, corresponding to the customer’s stated company goals, the key performance indicators (KPIs) relevant to those goals, and one or two industry peer groups. In automated benchmarking, many insights are generated because automation removes the need to select a small number of KPIs, pre-define a peer group, or even pick corporate goals (ref: Harvard Business Review). Removing these constraints yields a very large number of insights, both because of the many possible peer-group combinations and because performance indicators can be added that express quarterly or yearly changes, not just the last period’s results. Making use of more data and exploring more combinations require software automation.
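To give a rough sense of why the number of combinations grows so quickly, here is a minimal counting sketch. It assumes, purely for illustration, that a peer group is formed by choosing a small set of customer attributes (as in “profitable manufacturer in New England”) and that every KPI can be compared within every peer group. The function name, the parameters, and the numbers are hypothetical and are not taken from any vendor’s product.

```python
from math import comb


def count_candidate_comparisons(num_kpis, num_attributes, max_attrs_per_group):
    """Count (KPI, peer-group) pairs under a simplifying assumption:
    a peer group is defined by choosing between 1 and
    max_attrs_per_group attributes out of num_attributes."""
    peer_groups = sum(
        comb(num_attributes, k) for k in range(1, max_attrs_per_group + 1)
    )
    return num_kpis * peer_groups


# Manual style (hypothetical numbers): 3 KPIs, 2 pre-defined peer groups.
manual = count_candidate_comparisons(
    num_kpis=3, num_attributes=2, max_attrs_per_group=1
)

# Automated style (hypothetical numbers): 20 KPIs, peer groups built
# from up to 3 of 10 attributes.
automated = count_candidate_comparisons(
    num_kpis=20, num_attributes=10, max_attrs_per_group=3
)

print(manual, automated)
```

This counts candidate comparisons, not insights worth reporting. Only a fraction of the candidates would turn out to be noteworthy, but the search space itself is what makes automation necessary.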

In the ordinary customer-benchmarking use case, insights are specific to one customer. Thirty customer insights found by software, say, aren’t too many to examine. But consider the different use case of a Customer Success manager, or a regional VP, who oversees 30 customers and wishes to do proactive outreach based on the importance of the customer and the significance of the insight. At roughly 30 insights per customer, there are now 30×30 = 900 insights to examine. The numbers rise further in sales-VP or even CEO use cases.

What to do about this large number of insights? We see four recourses: (1) Stick to manual approaches so the problem doesn’t even arise. (2) Don’t pose queries that result in such large numbers, i.e., just analyze customers individually. (3) Arbitrarily review as many insights as time allows. (4) Automate these use-cases as well, i.e., automate the collection and ranking of insights in response to queries whose answers range over multiple customers. We’ll explore this last option in what follows.

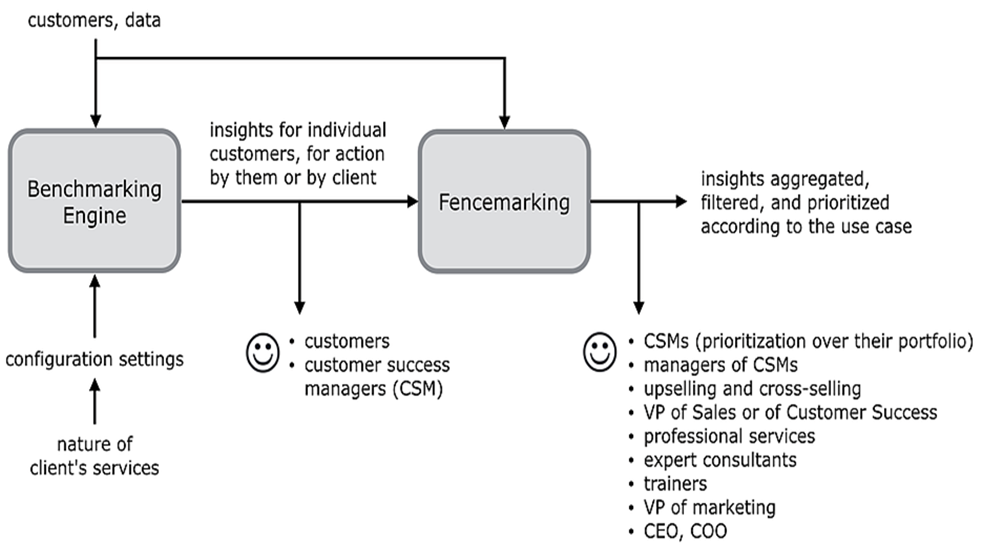

Let’s define a new task, called fencemarking, which involves forming a fence (figuratively) around some of the customers and/or some of the insights found by automated benchmarking, and then prioritizing among those fenced insights. Fencemarking addresses a variety of customer-centric use cases that go beyond consideration of a single customer.

Let’s examine some fencemarking use cases in what follows. Each B2B use case is followed by a verbatim example of a highly ranked insight found by benchmarking the 250 customers of a fictitious cloud-based vendor, EveryDay, which has three product and four service offerings. The simulated, experimental dataset contains 87 customer attributes that cover customer traits, behaviors, and outcomes. The fencemarking insights are ranked by the size of the customer and the noteworthiness of the insight.

1. A Customer Success manager wants to reach out proactively to those customers in their portfolio who have problematic circumstances, e.g., performance gaps. Across all the manager’s customers, find the top such insights.

Example: Camido Technologies has the 4th-most support tickets over the last year (44) of all the 250 customers. Those 44 represent 1.0% of the total among all 250 customers, whose average is 17.3.

2. The head of Professional Services offers consulting services for a customer problem that is revealed by a comparatively low value for metric X. Across all customers, find the top insights that mention a low X.

Example: Only Fivespan Technologies has both as low an annual contract value (ACV) ($9,500) and as low a new-hire high-performer rate (12.6%).

3. Some customers buy product HR but have low usage. Help them improve before they become dissatisfied, by offering training. Across all customers who bought HR, find the top insights that mention low usage.

Example: Innojam Software is one of only 4 customers that got worse or bottomed out on all the HR adoption measures (there are 6 of these, and each customer needs at least 3 with actual values to qualify).

4. The VP of Marketing wants to highlight customers who get great outcomes on a metric X while using product P. Across all customers who bought P, find the top insights that mention a comparatively superior X.

Example: Trudoo Inc has the lowest employee turnover rate (0%) among all the 250 customers. That 0% compares to an average of 13.4% across the 250 customers. Coming in under the average of 13.4% corresponded to a decrease of 517.4 employee separations.

5. The Chief Customer Officer wants to review major problems of diverse types among the biggest customers. Find the top negative insights across customers who spend at least $X per year.

Example: Jetwire Corp has the 8th-biggest drop in customer health score (CHS) compared to the same quarter last year (-16.0%) among all the 250 customers. That -16.0% compares to an average of +1.1% across the 250 customers. Also, that -16.0% represents a drop from 82 to 69.

6. The VP of Sales wants to identify upselling or cross-selling opportunities among manufacturing customers. Find the top insights that mention any buying gaps across all customers in the manufacturing industry.

Example: Of the 56 customers that have at most 22.1 weeks time as an HR customer, Gabtype Tech is one of only 7 that haven’t bought either EveryDay CRM or EveryDay Analytics.
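The six use cases above share a common shape: fence some customers and/or insights, then rank what remains. The following is a minimal sketch of that shape and is not the authors’ implementation. The `Insight` fields, the tag vocabulary, the sample records, and the choice to score by multiplying customer size and noteworthiness are all assumptions made for illustration; the article says only that both factors drive the ranking.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Insight:
    customer: str
    text: str
    customer_size: float    # e.g., annual spend in dollars (hypothetical field)
    noteworthiness: float   # score in [0, 1] from benchmarking (hypothetical)
    tags: frozenset         # labels such as "negative" (hypothetical)


def fencemark(insights, fence, top_n=3):
    """Keep the insights inside the fence, then rank them.

    The ranking score (size * noteworthiness) is an assumed way of
    combining the two factors; other combinations are possible.
    """
    fenced = [i for i in insights if fence(i)]
    fenced.sort(key=lambda i: i.customer_size * i.noteworthiness, reverse=True)
    return fenced[:top_n]


# Made-up records standing in for the output of automated benchmarking.
insights = [
    Insight("Customer A", "most support tickets", 100_000, 0.90,
            frozenset({"negative", "support"})),
    Insight("Customer B", "big drop in health score", 500_000, 0.40,
            frozenset({"negative", "health"})),
    Insight("Customer C", "lowest turnover rate", 20_000, 0.95,
            frozenset({"positive", "turnover"})),
    Insight("Customer D", "low product usage", 300_000, 0.50,
            frozenset({"negative", "usage"})),
]


# Use case 5: negative insights among customers spending at least $X per year.
def big_customer_problems(insight, min_spend=50_000):
    return "negative" in insight.tags and insight.customer_size >= min_spend


top = fencemark(insights, big_customer_problems, top_n=2)
for i in top:
    print(i.customer, "-", i.text)
```

Expressing the fence as a predicate keeps each use case to a few lines: the Professional Services query would test for a tag naming a low metric X, and the Sales query would test for industry and a buying-gap tag, while the ranking step stays the same.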

Readers can try their own queries on this experimental dataset at fencemarking.com.

Automated customer benchmarking removes the simplifying assumptions made by manual benchmarking, but it then leads to an explosion in the number of comparative insights. This explosion is manageable for the ordinary use case in which an individual examines one customer’s insights, for example to prepare for a quarterly business review. But automated benchmarking enables new use cases that cry out for automated fencemarking. The outputs of benchmarking are the inputs to fencemarking. By layering automation on top of automation, a wealth of customer-centric uses can be addressed by novel, action-provoking, comparative insights, limited only by the richness of the data used for benchmarking.

Raul Valdes-Perez and Andre Lessa