Effort is the bane of your customer experience. Or, as I like to say, “Thinking is bad.” But is customer effort the right measurement to use?

First, an overview. The CEB created the Customer Effort Score (CES) as a transactional measurement. You can see my early post here. Its original phrasing was “How much effort did you personally have to put forth to handle your request?” and a lot of blogs still point to this confusing phrase. Luckily, the CEB reworded it to “The company made it easy for me to handle my issue” in the CES 2.0.

Unfortunately, they haven’t taken the next step to call it the Customer Easy Score, which is much more fun to say.

The CES is a good way to measure your transactions. Unfortunately, I’ve run across a number of organizations that want to use it as a relationship score, but it just doesn’t work as an annual measurement. For that, I recommend the traditional “ease of doing business,” which is a broader statement but easier (ha!) for your customers to use to rate you.

Effort = customer churn. There’s a belief out there in the Service Recovery Paradox – that if you create an issue for your customers and do a really good job of resolving their issue, they’ll be more loyal than if they never had the problem in the first place. A quick search reveals a ton of articles advocating this belief. I’ve even talked with call center managers who have an unwavering belief in its power. But it’s bogus. A meta-analysis should have put this to rest. But comforting bad beliefs die hard.

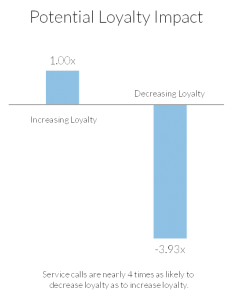

In fact, it’s silly. Do you really believe your customers will be happy being forced to call you to fix an issue, no matter what the outcome? The CEB showed the answer was clearly no. Their research (see the graph above) showed that a support call is four times more likely to decrease loyalty than to increase it.

BT took the CES one step further, creating the “Net Easy Score.” Similar to the Net Promoter Score, they treat the top 2 scores (on a 7-point scale) as easy and the bottom 3 as difficult, then subtract the difficult share from the easy share. In other words, if 40% of your customers give you a 6 or 7 (BT reverses the scale, so a high score means easy) and 30% give you a 1, 2 or 3, your Net Easy Score is 10 (40% – 30%). BT found that customers who rated the experience as easy were 40% less likely to churn than those who rated it as difficult.
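To make the arithmetic concrete, here is a minimal sketch of the Net Easy Score calculation as described above. It assumes raw responses on a 1–7 scale where 7 means easiest, with 6–7 counted as easy and 1–3 as difficult. The function name `net_easy_score` is hypothetical, not part of any BT tool.

```python
from typing import Iterable


def net_easy_score(scores: Iterable[int]) -> float:
    """Hypothetical helper: % of easy (6-7) minus % of difficult (1-3).

    Assumes a 7-point scale where 7 = easiest; scores of 4-5 are neutral
    and count only toward the total number of responses.
    """
    scores = list(scores)
    if not scores:
        raise ValueError("need at least one response")
    for s in scores:
        if not 1 <= s <= 7:
            raise ValueError(f"score out of range: {s}")
    easy = sum(1 for s in scores if s >= 6)       # top 2 boxes
    difficult = sum(1 for s in scores if s <= 3)  # bottom 3 boxes
    return 100.0 * (easy - difficult) / len(scores)


# Ten responses: 40% easy (four 6s/7s), 30% difficult (three 1-3s).
print(net_easy_score([7, 7, 6, 6, 5, 4, 4, 3, 2, 1]))  # 10.0
```

As with NPS, the neutral middle scores dilute both shares without moving the net figure directly. The score ranges from -100 (everyone found it difficult) to +100 (everyone found it easy).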

Is the Customer Effort or Net Easy Score right for you? The case is compelling, but there isn’t one score that makes sense for everybody. Still, one bank found that simplicity (an alternative to easy) was their best predictor of referrals, even better than likelihood to recommend. So it’s certainly worth considering as part of your measurement strategy.

In a webinar I conducted with nanoRep, I lay out more details on measuring and reducing effort. I end that webinar with four steps to create a simpler experience:

- Measure effort. I wouldn’t use it as my only transactional measure, but include it.

- Map. If you’re getting low scores, use customer journey maps to find out why from your customer’s point of view.

- Target. Once you’ve mapped the experience, target the top sources of effort.

- Solve. Use empathy mapping and other tools to determine how you want your customers to feel (like it was easy!) – and use this to guide your improvements.
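One simple way to approach the “Target” step is to rank journey touchpoints by how often customers rate them as difficult. The sketch below is one possible approach, not a method the post prescribes. It assumes you have tagged each effort response with a touchpoint; the touchpoint names and data are hypothetical. It also reuses the 1–3 “difficult” cutoff from BT’s Net Easy Score.

```python
from collections import defaultdict
from typing import Iterable, List, Tuple


def rank_effort_sources(
    responses: Iterable[Tuple[str, int]],
) -> List[Tuple[str, float]]:
    """Rank touchpoints by share of difficult (1-3) ratings, worst first.

    Assumes a 7-point scale where 7 = easiest. Each response is a
    hypothetical (touchpoint, score) pair.
    """
    counts = defaultdict(lambda: [0, 0])  # touchpoint -> [difficult, total]
    for touchpoint, score in responses:
        counts[touchpoint][1] += 1
        if score <= 3:
            counts[touchpoint][0] += 1
    ranked = [(tp, difficult / total) for tp, (difficult, total) in counts.items()]
    ranked.sort(key=lambda item: item[1], reverse=True)
    return ranked


# Hypothetical survey data tagged by touchpoint.
data = [
    ("billing", 2), ("billing", 3), ("billing", 6),
    ("password reset", 1), ("password reset", 7),
    ("delivery", 6), ("delivery", 7),
]
for touchpoint, share in rank_effort_sources(data):
    print(f"{touchpoint}: {share:.0%} difficult")
```

A share-based ranking ignores volume. In practice, you might also weight each touchpoint by how many customers pass through it before deciding what to fix first.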

I hope you find this advice easy to follow…

There are so many challenges associated with this service-based metric that, perhaps even more than you, I’m very much in the professional researcher/consultant camp. If an organization is truly serious about optimizing customer-centricity, satisfaction, CES, and NPS as core measures have serious conceptual flaws and/or granular action limitations:

http://customerthink.com/as-the-old-expression-goes-you-can-put-lipstick-on-a-pig-but/

http://customerthink.com/is-there-a-single-most-actionable-contemporary-and-real-world-metric-for-managing-optimizing-and-leveraging-customer-experience-and-behavior/

http://customerthink.com/when-b2b-and-b2c-key-performance-metrics-flatline/

As noted in a blog response from a couple of months ago, at the very minimum, a performance metrics system should be augmented with customer advocacy and brand bonding metrics, and accompanying actionable analysis. These will help any B2B or B2C company generate stronger results for their array of customer experience initiatives.

Michael,

I certainly agree on the need to measure advocacy and other relationship components. But I’m not certain that a transactional survey is the place to conduct such a measurement.

It seems that these measures are best used in a relationship survey. I certainly recommend adding an emotional measurement component into the transactional survey, but attempting to measure the strength of a relationship in a transactional measurement introduces way too much bias.

Having been involved with customer advocacy and brand bonding behavior research for over a decade, in addition to about four decades of customer experience research, I can say with certainty that advocacy research applies equally well to both transactional/tactical and relationship/strategic customer research. Emotions, brand affinity, and WOM, when combined, have a powerful impact on future customer behavior. I also understand, at a very deep and well-studied level, what these cure-all metrics purport to do, and what they actually can and can’t accomplish.

We’re dealing with customer-centric, trust-based execution, whether in the short term or the long term, and the profound effect this has on marketplace action. That’s why well-meaning measures like satisfaction, NPS, and CES are usually insufficient and frequently misleading as granular and actionable decision-making guides for most B2B and B2C companies: http://www.targetmarketingmag.com/blog/customer-centric-trust-based-relationships-humanity-emotion-profits