[Image Created By eSparkinfo.com Team]

“Learning What Not To Do Is Sometimes More Important Than Learning What To Do.”

– Rick Pitino

The quote above sums up why knowing what not to do matters in any aspect of life. The same applies in the technical field, where knowing what shouldn’t be done is sometimes more important than knowing what should be done.

If you apply this quote to web development, you will find that there are certain do’s and don’ts that you need to be aware of. In the last few years, many business websites have been built on WordPress, so it is important to know the Things To Do Before Launching A WordPress Website.

One of the things you need to decide before launching a WordPress website is which content you want indexed and which content you need to exclude from Google Search.

With that in mind, today we’re going to walk you through 4 methods by which you can exclude WordPress content from Google Search. So, let’s get things underway.

Methods To Exclude WordPress Content From Google Search

1. Using Robots.txt For Images

2. Using no-index Meta Tags For Pages

3. Using X-Robots-Tag HTTP Header For Other Files

4. Using .htaccess Rules For Apache Servers

1. Using Robots.txt For Images

For those who don’t know, robots.txt is a file located at the root of your WordPress website. It contains instructions that tell search engine crawlers such as Google, Bing, and Yahoo what to crawl and what not to crawl.

If you ask any Custom WordPress Development Company about the use of a robots.txt file, they will tell you that it is not limited to traffic control and web crawling. It can also be used to prevent images on a WordPress website from being crawled by Googlebot.

Now, if you open the robots.txt file of a typical WordPress website, it will look something like this:

User-agent: *

Disallow: /wp-admin/

Disallow: /wp-includes/

A standard robots.txt file generally starts with a User-agent line that names the crawler the rules apply to. The asterisk (*) indicates that the rules that follow apply to all user-agents, or bots, that visit the website.
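As a quick sanity check, rules like the ones above can be tested offline before they go live. The following is a minimal sketch using Python’s standard urllib.robotparser module; the example.com URLs and the is_allowed helper are assumptions made for illustration and are not part of WordPress.

```python
from urllib.robotparser import RobotFileParser

# The default WordPress-style rules discussed above.
ROBOTS_TXT = """\
User-agent: *
Disallow: /wp-admin/
Disallow: /wp-includes/
"""


def is_allowed(robots_txt, user_agent, url):
    """Return True if the given rules let `user_agent` crawl `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# example.com is a placeholder domain (assumption).
print(is_allowed(ROBOTS_TXT, "Googlebot", "https://example.com/wp-admin/options.php"))
print(is_allowed(ROBOTS_TXT, "Googlebot", "https://example.com/blog/hello-world/"))
```

Because the rules are listed under User-agent: *, the first URL is reported as blocked for any bot name you pass in, while the second one stays crawlable.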

Now, let’s analyze some ways to keep the bots away from specific digital files using the robots.txt file.

To prevent crawlers, including Googlebot, from crawling PDF files, add the following rules to your WordPress website’s robots.txt file.

User-agent: *

Disallow: /pdfs/ # Block the /pdfs/ directory.

Disallow: /*.pdf$ # Block PDF files for all bots. Albeit non-standard, it works for major search engines.

To prevent Googlebot-Image from crawling a specific JPEG file, add the following rules to your WordPress website’s robots.txt file.

User-agent: Googlebot-Image

Disallow: /images/cats.jpg # Block the cats.jpg image for Googlebot-Image specifically.

If you want to block all .gif images from being indexed and shown in Google Search while allowing other image formats such as JPEG and PNG to be indexed, use the following rules.

User-agent: Googlebot-Image

Disallow: /*.gif$
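To make the wildcard syntax concrete, here is a simplified Python sketch of how such a pattern is compared with a URL path: * stands for any sequence of characters and a trailing $ pins the match to the end of the path. The rule_matches helper and the sample paths are hypothetical, and this is an illustration of the documented semantics rather than any search engine’s actual matcher.

```python
import re


def rule_matches(pattern, path):
    """Check a robots.txt path pattern with * and $ against a URL path."""
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]
    # Escape literal text and let each * match any run of characters.
    body = ".*".join(re.escape(part) for part in pattern.split("*"))
    if anchored:
        # With $, the pattern must cover the path right up to its end.
        return re.fullmatch(body, path) is not None
    # Without $, the pattern only has to match from the start of the path.
    return re.match(body, path) is not None


print(rule_matches("/*.gif$", "/uploads/2018/banner.gif"))   # True
print(rule_matches("/*.gif$", "/uploads/2018/photo.jpg"))    # False
print(rule_matches("/pdfs/", "/pdfs/annual-report.pdf"))     # True
```

Note that with the $ anchor, a path such as /uploads/banner.gif?size=large would not match, because it no longer ends in .gif.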

The Googlebot-Image user-agent can be used to block all images, or images with a particular extension, from appearing in Google Image Search. However, if you want to exclude the images from all kinds of Google Search, it is advisable to use the Googlebot user-agent instead.

Similar to Googlebot-Image, Googlebot-Video can be used to block all the videos or videos with a particular extension from appearing in Google Video Search.

However, when using a robots.txt file to block website content, you should be aware of its limitations, which are listed below:

- It only works for well-behaved web crawlers.

- It doesn’t stop the server from sending pages & files to unauthorized users.

- Search engines could still find & index the pages if other sites link to them.

- Robots.txt is publicly accessible to anyone.

2. Using no-index Meta Tags For Pages

As we saw in the previous method, robots.txt is not a sure-shot way to block website content, so we need other methods to exclude WordPress content from Google Search.

One of those methods is to add a no-index meta tag to any page that you don’t want displayed in Google Search. Unlike robots.txt, which only controls crawling, this tag tells search engines directly not to index the page, which makes it a much more reliable method.

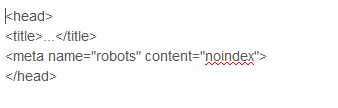

The no-index meta tag is placed in the <head> section of the web page, as shown in the snippet below:

<meta name="robots" content="noindex">

If you add this code to any of your pages, that page will be dropped from Google Search once Googlebot next crawls it. In addition, you can use other directives such as nofollow and notranslate, which tell search engine crawlers not to follow the links on the page and not to offer a translation of the page, respectively.
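One way to confirm which robots directives a page actually carries is to parse its HTML. The sketch below uses Python’s standard html.parser module; the SAMPLE_PAGE markup, the RobotsMetaParser class, and the choice to look only at the robots and googlebot names are assumptions made for this example.

```python
from html.parser import HTMLParser

# Meta names to inspect; extend this set for other crawlers (assumption).
CRAWLER_NAMES = {"robots", "googlebot"}


class RobotsMetaParser(HTMLParser):
    """Collect the directives found in robots-style meta tags."""

    def __init__(self):
        super().__init__()
        self.directives = {}

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attributes = dict(attrs)
        name = (attributes.get("name") or "").lower()
        if name not in CRAWLER_NAMES:
            return
        content = attributes.get("content") or ""
        parts = [p.strip().lower() for p in content.split(",") if p.strip()]
        self.directives.setdefault(name, []).extend(parts)


def robots_directives(html):
    """Return a dict mapping each crawler name to its list of directives."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return parser.directives


# Hypothetical page used only for illustration.
SAMPLE_PAGE = """<html><head>
<meta name="robots" content="noindex, nofollow">
<title>Login</title>
</head><body></body></html>"""

print(robots_directives(SAMPLE_PAGE))  # {'robots': ['noindex', 'nofollow']}
```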

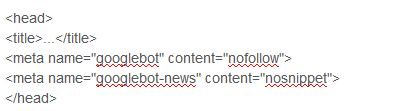

You can also instruct multiple crawlers by using multiple meta tags, for example:

<meta name="googlebot" content="nofollow">

<meta name="bingbot" content="noindex">

There are also two other ways to add this tag to your WordPress website. The first option is to create a child theme in WordPress and then, in its functions.php file, use the wp_head action to add a noindex tag, as shown in the snippet below.

add_action( 'wp_head', function() {

// Output the tag only on the page with the slug 'login'.

if ( is_page( 'login' ) ) {

echo '<meta name="robots" content="noindex">';

}

} );

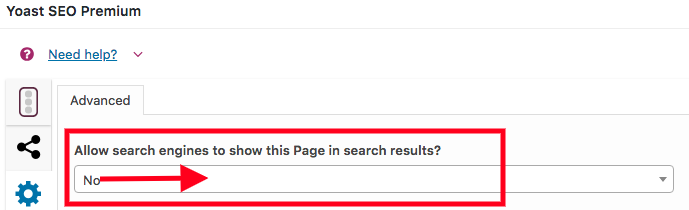

The second option is to configure the Yoast SEO plugin. To do that, go to the advanced settings in the Yoast SEO plugin, as shown in the screenshot below.

[Image Source: https://www.wpexplorer.com/wp-content/uploads/yoast-seo-page-search-results.png ]

Now, in the advanced settings, simply choose the ‘No’ option for ‘Allow search engines to show this Page in search results?’, which will tell search engine crawlers not to index that particular page.

3. Using X-Robots-Tag HTTP Header For Other Files

The X-Robots-Tag gives you more flexibility to prevent content from being indexed and appearing in Google Search results. Unlike the no-index meta tag, the X-Robots-Tag is sent as an HTTP response header, so it can be used for any URL.

For example, you can use the X-Robots-Tag for images, videos, or documents, where it’s not possible to use the robots meta tag.

Here’s how you can tell crawlers not to index a JPEG image, or follow links from it, using the X-Robots-Tag in the HTTP response header:

HTTP/1.1 200 OK

Content-type: image/jpeg

Date: Tue, 27 Nov 2018 01:02:09 GMT

(…)

X-Robots-Tag: noindex, nofollow

(…)

Any directive that you can use with the robots meta tag can also be used with the X-Robots-Tag. Here’s how you can instruct multiple search engine bots:

HTTP/1.1 200 OK

Date: Fri, 21 Sep 2018 21:09:19 GMT

(…)

X-Robots-Tag: googlebot: nofollow

X-Robots-Tag: bingbot: noindex

X-Robots-Tag: otherbot: noindex, nofollow

(…)
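The header forms shown above can be read programmatically as well. The following Python sketch groups X-Robots-Tag values by crawler, filing rules without a crawler name under "*". The parse_x_robots_tag and blocks_indexing helpers are hypothetical names, and the handling of directives that carry their own value (such as unavailable_after) is deliberately simplified.

```python
# Directives that contain a colon of their own, so the text before the
# colon must not be mistaken for a crawler name.
VALUED_DIRECTIVES = {
    "unavailable_after", "max-snippet", "max-image-preview", "max-video-preview",
}


def parse_x_robots_tag(header_values):
    """Map each crawler name ('*' for all crawlers) to its directives."""
    rules = {}
    for value in header_values:
        agent, directives = "*", value
        head, sep, tail = value.partition(":")
        # A "name:" prefix scopes the directives to a single crawler.
        if sep and "," not in head and head.strip().lower() not in VALUED_DIRECTIVES:
            agent, directives = head.strip().lower(), tail
        names = [d.strip().lower() for d in directives.split(",") if d.strip()]
        rules.setdefault(agent, []).extend(names)
    return rules


def blocks_indexing(rules, crawler):
    """True if the rules tell `crawler` not to index the resource."""
    applicable = rules.get("*", []) + rules.get(crawler.lower(), [])
    return "noindex" in applicable or "none" in applicable


headers = [
    "googlebot: nofollow",
    "bingbot: noindex",
    "otherbot: noindex, nofollow",
]
rules = parse_x_robots_tag(headers)
print(rules)
print(blocks_indexing(rules, "bingbot"))    # True
print(blocks_indexing(rules, "googlebot"))  # False
```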

One thing that any Custom WordPress Development Company should be aware of when using the X-Robots-Tag is that bots discover both the robots meta tag and the X-Robots-Tag only when they crawl the URL. So, if you want the bots to follow these instructions, you must not block those pages from being crawled.

In simple terms, if a page is blocked by the robots.txt file, crawlers never fetch it, and your indexing instructions will not come into play. So, always be careful when combining these methods.
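This kind of conflict can be spotted ahead of time. The Python sketch below takes a list of URLs that you have marked as noindex and reports those that robots.txt also blocks, meaning a crawler would never get to see the directive. The find_conflicts helper, the sample rules, and the example.com URLs are all assumptions for illustration.

```python
from urllib.robotparser import RobotFileParser


def find_conflicts(robots_txt, user_agent, noindex_urls):
    """Return the noindex URLs that robots.txt stops `user_agent` from crawling."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in noindex_urls if not parser.can_fetch(user_agent, url)]


# Hypothetical rules and URLs.
rules_txt = "User-agent: *\nDisallow: /private/\n"
pages_with_noindex = [
    "https://example.com/private/report.html",
    "https://example.com/login/",
]
print(find_conflicts(rules_txt, "Googlebot", pages_with_noindex))
# ['https://example.com/private/report.html']
```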

4. Using .htaccess Rules For Apache Servers

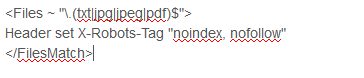

In the previous section, we saw how the X-Robots-Tag can prevent content from being indexed. If your website is hosted on an Apache server, you can set this header, or block crawlers outright, using .htaccess rules. Unlike no-index meta tags, .htaccess rules can be applied to an entire website or to a particular folder.

In addition, the .htaccess file supports regular expressions, so you can target multiple files in one go with these rules.

To block the Google Bot, Bing and Baidu from crawling the entire website or a specific directory, use the following .htaccess rules:

RewriteEngine On

RewriteCond %{HTTP_USER_AGENT} (googlebot|bingbot|Baiduspider) [NC]

RewriteRule .* - [R=403,L]
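To see which visitors the RewriteCond above would catch, the same pattern can be tried against sample User-Agent strings. This is a rough Python sketch and not Apache itself: re.IGNORECASE stands in for the [NC] flag, re.search mirrors the unanchored match, and the would_be_blocked helper and the browser string are illustrative assumptions.

```python
import re

# Same alternation as the RewriteCond pattern; IGNORECASE mimics [NC].
BLOCKED_BOTS = re.compile(r"(googlebot|bingbot|Baiduspider)", re.IGNORECASE)


def would_be_blocked(user_agent):
    """True if the User-Agent contains one of the blocked bot names."""
    return BLOCKED_BOTS.search(user_agent) is not None


samples = [
    "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)",
    "Mozilla/5.0 (compatible; bingbot/2.0; +http://www.bing.com/bingbot.htm)",
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) Firefox/120.0",
]
for agent in samples:
    print(would_be_blocked(agent), agent)
```

Keep in mind that this check relies on the User-Agent string alone, so it only affects bots that identify themselves honestly.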

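To block the indexing of all .txt, .jpg, .jpeg, and .pdf files across your whole website, use the following snippet (it requires Apache’s mod_headers module):

<FilesMatch "\.(txt|jpg|jpeg|pdf)$">

Header set X-Robots-Tag "noindex"

</FilesMatch>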

Summing Up Things…

In the era of digital marketing, the race to be no. 1 in Google Search results has intensified dramatically. Many business owners nowadays focus only on search engine ranking and neglect the other aspects of SEO.

In doing so, most of them overlook which content to exclude from search engines, which is also a critical part of SEO. With that in mind, we have tried to provide you with a list of 4 methods by which you can exclude WordPress content from Google Search.

If you have any questions or suggestions regarding this blog, feel free to share them in our comment section. We will try to respond to each of your queries. Thank you!