Customer experience management (CEM) programs involve the collection, analysis and dissemination of customer feedback. These customer feedback data are extremely valuable to businesses. Customer feedback data are used to help senior executives identify and improve key drivers of customer loyalty. They help call center staff immediately address specific customer issues. They help managers understand how their business unit compares with other business units. Finally, customer feedback data can help your marketing and research departments uncover deep customer insights through more sophisticated analyses of the data and linking customer feedback data to other sources of enterprise data (e.g., employee data, operational data, financial data).

The Problem of Data Errors

The value of your customer feedback data rests on their quality. Different sources of error decrease the quality of the data, and these errors can take different forms. I recently wrote about the quality of your customer metrics to help you select/develop customer metrics that are reliable, valid and useful. In addition to being subject to these measurement errors, your data are subject to errors due to careless handling of survey design, data collection, and the coding and analysis of the data.

The problem of poor quality customer feedback data is potentially extensive when you consider the different uses of the customer feedback data across your company.

- Marketing analyses that examine specific customers’ attitudes over extended periods of time (longitudinal analysis) simply compound data error problems, which could mask real differences or inadvertently show a relationship where none exists.

- Merging these error-ridden customer feedback data with other data sources (e.g., financial, operational, constituency) diminishes the value of the entire merged database (a major issue when dealing with “Big Data”). Data errors will limit your ability to uncover operational drivers of customer satisfaction or financial consequences of customer loyalty.

- Integration of poor customer feedback data into your customer relationship management (CRM) system/process will adversely impact account management activities (e.g., you think your contact in your Account is satisfied, when, in reality, she is not).

It is clear that data errors can easily get compounded as more and more people use the data. Ensuring your customer data are free from errors is essential to a successful CEM program. While some data errors are inevitable, many can be prevented, and the rest corrected, by following a few simple rules. These rules are based on an article (Guidelines for clean data: Detection of common mistakes) published in 1986 by Patricia Smith (one of my professors in graduate school) and her research team. I try to adhere to these rules whenever I create data for my personal research or my clients’ research. I have modified the rules to be more in line with today’s digital age (she referenced the use of data cards in her article!) and to focus on customer feedback data.

Rule 1: Do not Modify Standard Customer Metrics Without Clearly Indicating the Changes

Try to avoid modifying standard survey questions. Comparisons of results across similarly worded, yet different, questions need to be done carefully. Even slight wording changes to questions can have substantial effects on the meaning of the responses to those survey questions. These changes include the actual wording of the question as well as the rating scale associated with that question. For example, the “recommend” question is typically presented as “How likely are you to recommend company ABC to your friends/colleagues?” How comparable is the question, “How willing are you to recommend company ABC to your friends/colleagues?” Comparing responses across these two questions is problematic. Observed differences in ratings across these two questions cannot be attributed solely to any real differences in advocacy loyalty.

When you do make any changes to your survey questions or rating scales, note when those changes occurred and the exact changes that took place.

Rule 2: Design Responses With the Future Method of Analysis in Mind

Determine how you will score and analyze the data in advance of collecting the feedback. Before administering the customer survey, create your scoring key as well as the corresponding computer programming syntax you will use to analyze the data. Consider conducting a pilot survey to ensure the questions and responses make sense to the customers. Analyze that pilot data using the aforementioned computer programming syntax to see if the analyses run smoothly without error.
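As a minimal sketch of what a scoring key and its scoring syntax might look like before the survey is fielded, consider the Python below. The item names, the response options and the pilot responses are hypothetical; only the coding of “Primary Decision Maker” as 3 follows the example used later in this post.

```python
# Hypothetical scoring key: maps each survey item to the numeric value
# assigned to every response option the customer can see.
SCORING_KEY = {
    "overall_satisfaction": {
        "Very dissatisfied": 1,
        "Dissatisfied": 2,
        "Neutral": 3,
        "Satisfied": 4,
        "Very satisfied": 5,
    },
    "decision_role": {
        "Influencer": 1,
        "Evaluator": 2,
        "Primary Decision Maker": 3,
    },
}


def score_response(item, answer, key=SCORING_KEY):
    """Translate one verbal answer into its numeric value.

    Raises ValueError instead of guessing, so that a pilot run exposes
    any answer the scoring key does not cover.
    """
    try:
        return key[item][answer]
    except KeyError:
        raise ValueError(f"No score defined for {item!r} -> {answer!r}") from None


# Made-up pilot responses, scored before the full survey is administered.
pilot = [
    {"overall_satisfaction": "Satisfied", "decision_role": "Primary Decision Maker"},
    {"overall_satisfaction": "Very satisfied", "decision_role": "Influencer"},
]
scored_pilot = [
    {item: score_response(item, answer) for item, answer in row.items()}
    for row in pilot
]
print(scored_pilot)
```

Failing loudly on an unknown answer is a deliberate choice here: a pilot run that stops with an error is easier to act on than one that silently produces blank or default scores.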

Rule 3: Get Deeply Involved in the Data Management Process

Data management involves different employees/experts across the data collection, analysis and reporting efforts. The survey vendor collects the customer feedback and codes the responses. Those data are sent to your internal team for further coding (e.g., creating metrics) and deeper statistical analysis. Those data, in turn, are used by your IT department for integration into your existing business systems. Each time the data are handled, another opportunity for error is introduced into your customer feedback data.

Your team needs to work together seamlessly to ensure the data maintain their integrity throughout this process. Educate the entire team about each step of the data management process (from data collection to analysis and reporting). Share these rules with them and let them regularly discuss the processes and any changes in the data management process.

Understand the mindset of the customer by completing the survey yourself. Examine the raw customer feedback data to understand how the customer responses correspond to the numerical values in the data. For example, you could find that verbal responses to survey questions (e.g., answering “Primary Decision Maker” to the survey question, “What is your decision-making role?”) are stored as numerical values (e.g., “Primary Decision Maker” is stored as the number 3 in the data set).

Rule 4: Check Scoring Key Against Customer Survey

The scoring key for your survey translates the customer responses into meaningful values that you will use to interpret the results. Be sure the scoring key matches the actual customer survey, item by item, exactly. This rule is especially important to follow when you are making changes to your survey over time (adding new questions/response options, removing other questions/response options). If even one or two survey questions are inadvertently moved to different parts of the new survey, applying the scoring key from the first survey will produce incorrectly translated values.
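One way to automate this item-by-item check is sketched below. It assumes that both the survey and the scoring key are available as dictionaries keyed by item name (a positional key would need a different check). The item names, options and the `key_mismatches` helper are hypothetical.

```python
def key_mismatches(survey, scoring_key):
    """Return a list of human-readable differences between survey and key."""
    problems = []
    for item in sorted(set(survey) | set(scoring_key)):
        if item not in scoring_key:
            problems.append(f"{item}: on survey but missing from scoring key")
        elif item not in survey:
            problems.append(f"{item}: in scoring key but not on survey")
        else:
            shown = set(survey[item])        # options the customer sees
            keyed = set(scoring_key[item])   # options the key can score
            for option in sorted(shown - keyed):
                problems.append(f"{item}: option {option!r} has no score")
            for option in sorted(keyed - shown):
                problems.append(f"{item}: key has unused option {option!r}")
    return problems


# Hypothetical revised survey checked against the key from the first survey.
survey_v2 = {
    "ease_of_use": ["Poor", "Fair", "Good", "Excellent"],
    "support_quality": ["Poor", "Fair", "Good"],
}
key_v1 = {
    "ease_of_use": {"Poor": 1, "Fair": 2, "Good": 3},
    "product_quality": {"Poor": 1, "Fair": 2, "Good": 3},
}
for problem in key_mismatches(survey_v2, key_v1):
    print(problem)
```

An empty list means the key and the survey agree on every item and option; anything else is worth resolving before a single response is scored.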

Rule 5: Recode Items that Measure the Same Characteristic in the Same Direction

Score items that measure the same characteristic so that a high value means the same thing on each of them. For example, when scoring responses for “likelihood to switch to another provider” and “likelihood to renew service contract,” interpretation is clearer if a high score on both questions means higher levels of customer loyalty (e.g., not likely to switch and likely to renew). The relationship of these two types of customer retention metrics with customer satisfaction is expected to be positive (higher levels of satisfaction lead to higher levels of retention loyalty) and the use of this rule will minimize any misinterpretation of the meaning of high or low values on the metric.
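Reverse coding can be sketched in a few lines. The example assumes both questions use a 0 to 10 scale; the ratings and the `reverse_code` helper are made up for illustration.

```python
SCALE_MIN, SCALE_MAX = 0, 10  # assumed 0-10 likelihood scale


def reverse_code(value, low=SCALE_MIN, high=SCALE_MAX):
    """Flip a rating so the top of the scale becomes the bottom."""
    if not low <= value <= high:
        raise ValueError(f"{value} is outside the {low}-{high} scale")
    return low + high - value


# Made-up "likelihood to switch" ratings: high means LESS loyal.
likelihood_to_switch = [0, 2, 9, 10]

# After recoding, high means MORE loyal, matching "likelihood to renew".
retention_from_switch = [reverse_code(v) for v in likelihood_to_switch]
print(retention_from_switch)  # [10, 8, 1, 0]
```

Keeping the original variable and storing the recoded values under a new name makes it harder to recode the same item twice by accident.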

Rule 6: Transform Item Values to Match the Scoring Key

Be sure the customer responses precisely match the scoring key. An 11-point scale is typically used when measuring customer loyalty. The values for this scale are typically coded from 0 (not at all likely) to 10 (extremely likely). Applying incorrect values to the responses (e.g., using 1 to 11 instead of 0 to 10) will impact the results. For example, I had a client who used their survey vendor’s site to generate averages for each survey question. These site-generated averages were higher than the averages I had calculated using my statistical software package. After a little investigation, I discovered the survey vendor had inadvertently coded the loyalty responses as 1 (not at all likely) to 11 (extremely likely) instead of 0 to 10, thus inflating their calculation of the averages.
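The arithmetic behind this kind of inflation is easy to reproduce. The ratings below are made up; the sketch only shows that shifting every code up by one shifts the average up by exactly one, and that a quick look at the observed range gives the error away.

```python
from statistics import mean

# Made-up answers to a 0-10 likelihood question, coded correctly.
coded_0_to_10 = [0, 6, 8, 9, 10]

# The same five answers after an off-by-one coding error (1 to 11).
coded_1_to_11 = [value + 1 for value in coded_0_to_10]

correct_mean = mean(coded_0_to_10)
inflated_mean = mean(coded_1_to_11)
print(correct_mean, inflated_mean)  # 6.6 7.6

# A 0-10 scale can never produce an 11, so the observed minimum and
# maximum are a cheap first check on how the responses were coded.
observed_range = (min(coded_1_to_11), max(coded_1_to_11))
print(observed_range)  # (1, 11)
```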

Rule 7: Assign Unique Numbers to Missing Values to Distinguish Them From Other Responses

Missing responses need to be coded with unique numbers that distinguish them from other responses. Be sure the missing value codes are excluded from the calculation of summary statistics (e.g., mean, median, variance). Including missing value codes in statistical calculations can lead to inflated correlation coefficients among the impacted variables.
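A short sketch shows how badly a missing-value code can distort even a simple average when it is left in the calculation. The code 99 and the ratings are assumptions chosen for illustration; any code works as long as it cannot be mistaken for a real response.

```python
from statistics import mean

MISSING = 99  # assumed missing-value code; it lies outside the 0-10 scale

# Made-up 0-10 ratings from six customers, two of whom skipped the question.
raw = [8, 9, 99, 7, 99, 10]


def valid_only(values, missing_code=MISSING):
    """Drop missing-value codes before any statistic is calculated."""
    return [v for v in values if v != missing_code]


naive_mean = mean(raw)               # wrongly treats 99 as a real rating
clean_mean = mean(valid_only(raw))   # uses the four real ratings only
print(naive_mean, clean_mean)
```

Here the naive average lands far above the top of the 0 to 10 scale, which is obvious. A missing code closer to the valid range (say, 11) would do its damage far more quietly.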

Rule 8: Make Sure Data Sets are Comparable Before Aggregating Them into a Larger Set

Before combining different data sources, compare means, standard deviations, and distributions of each data set. We know that extreme differences across groups will distort your analyses on the overall data set. Combining data sets with widely different mean values could distort estimates of correlations, for example. Correlations may be artificially inflated when extreme samples are combined. A good example is one in which customer satisfaction results from different computer manufacturers are combined into one overall data set. Including Apple’s customers’ responses (typically highly satisfied) with other manufacturers’ customers’ responses (typically average) will inflate the correlation between, say, customer satisfaction and customer loyalty metrics.
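The inflation is easy to demonstrate with made-up numbers. In the sketch below, two hypothetical customer groups each show a weak negative correlation between satisfaction and loyalty, yet the combined data show a strong positive one, purely because the group means differ. The data and the `pearson` helper are illustrative assumptions.

```python
from statistics import mean, stdev


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length lists."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    return cov / (var_x * var_y) ** 0.5


# Made-up ratings: group A is highly satisfied, group B is average.
group_a_sat, group_a_loy = [9, 10, 9, 10, 9, 10], [10, 9, 9, 10, 10, 9]
group_b_sat, group_b_loy = [5, 6, 5, 6, 5, 6], [6, 5, 5, 6, 6, 5]

# Step 1: compare the groups before combining them.
for name, sat in (("A", group_a_sat), ("B", group_b_sat)):
    print(name, "mean:", mean(sat), "sd:", round(stdev(sat), 2))

# Step 2: see what combining does to the correlation.
r_a = pearson(group_a_sat, group_a_loy)
r_b = pearson(group_b_sat, group_b_loy)
r_combined = pearson(group_a_sat + group_b_sat, group_a_loy + group_b_loy)
print(round(r_a, 2), round(r_b, 2), round(r_combined, 2))
```

When the group means differ this much, analyzing each group separately (or including the group as a variable in the analysis) is usually safer than pooling.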

Rule 9: Label Compulsively

Create a spreadsheet that contains all information for each variable in your customer feedback data set. Using the rules above, here is what I list, at a minimum, for each survey question: 1) entire survey question, 2) response options (what the survey taker sees), 3) corresponding values for each response option (used for calculations), 4) changes, if any, to the question. Some other pertinent information about the entire data set you may want to include (in case those data are not part of the customer feedback process) are the date the data were collected and the job level and job role of respondents.

When labeling the pertinent information about your customer data set, err on the side of overdescription. You will be glad you did.
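If you prefer to keep this labeling information next to your analysis code, a codebook along these lines can be written out as a CSV file that opens in any spreadsheet program. The column names, the single example row, the missing code of 99 and the helper functions are hypothetical.

```python
import csv
import io

CODEBOOK_FIELDS = ["variable", "question_text", "response_options", "values", "changes"]

# One hypothetical codebook entry per variable in the data set.
codebook = [
    {
        "variable": "recommend",
        "question_text": "How likely are you to recommend company ABC to your friends/colleagues?",
        "response_options": "0 (not at all likely) to 10 (extremely likely)",
        "values": "0-10; 99 = missing (assumed code)",
        "changes": "none",
    },
]


def write_codebook(rows, handle):
    """Write the codebook to any open text file or buffer as CSV."""
    writer = csv.DictWriter(handle, fieldnames=CODEBOOK_FIELDS)
    writer.writeheader()
    writer.writerows(rows)


def undocumented(data_columns, rows):
    """List data set columns that have no codebook entry yet."""
    documented = {row["variable"] for row in rows}
    return [column for column in data_columns if column not in documented]


buffer = io.StringIO()  # stands in for open("codebook.csv", "w", newline="")
write_codebook(codebook, buffer)
print(buffer.getvalue())
print(undocumented(["recommend", "renew"], codebook))  # ['renew']
```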

Rule 10: Back Up Your Data

If you ever want to see me cry over lost data, you won’t… anymore. On a dedicated, separate hard drive, I back up all my raw data and the labeling information about the data set. Be sure to create a backup of your data and the pertinent information about the data. A backup will save you time and, yes, tears. Incorporate these 10 rules with your company’s standard data backup rules regarding company data.

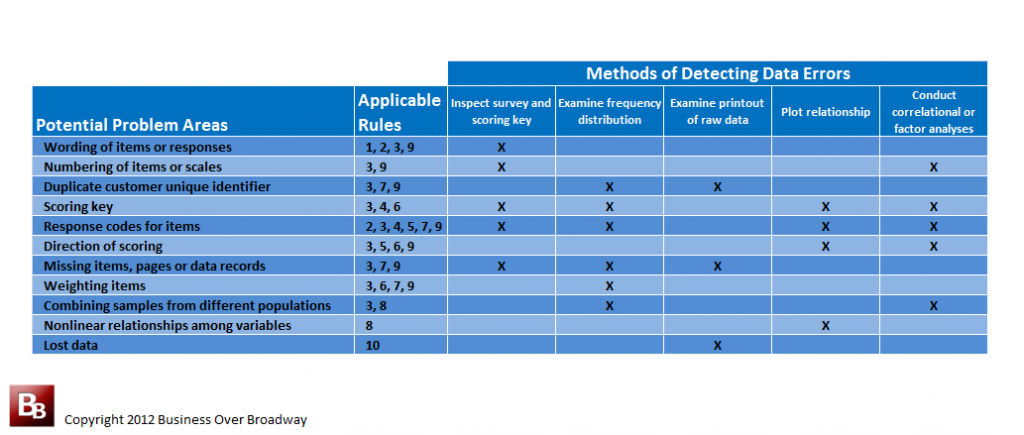

Table 1. Some potential problem areas, corresponding rules and methods to detect data errors.

Detecting Data Processing Errors

You spend a lot of resources (human and financial) to gather and process customer feedback data. Table 1 includes a few potential problem areas around the quality of customer feedback data along with different ways you can detect data errors and the applicable rules to follow to minimize or prevent the errors.

These methods of detecting data errors range from simply examining the survey form and raw data to analyzing the data to detect impossible responses.
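Scanning for impossible responses lends itself to automation. The sketch below assumes each variable has a known set of legal values; the variable names, the legal values (including a missing code of 99) and the sample rows are hypothetical.

```python
# Legal values per variable, taken from a hypothetical scoring key.
ALLOWED = {
    "recommend": set(range(0, 11)) | {99},  # 0-10 scale plus assumed missing code
    "decision_role": {1, 2, 3, 99},
}

# Made-up rows of coded survey data.
rows = [
    {"id": "r1", "recommend": 10, "decision_role": 3},
    {"id": "r2", "recommend": 11, "decision_role": 2},
    {"id": "r3", "recommend": 7, "decision_role": 0},
]


def impossible_responses(rows, allowed=ALLOWED):
    """Return (respondent id, variable, value) for every illegal value."""
    flagged = []
    for row in rows:
        for variable, legal in allowed.items():
            value = row.get(variable)  # None if the column is absent
            if value not in legal:
                flagged.append((row["id"], variable, value))
    return flagged


for respondent, variable, value in impossible_responses(rows):
    print(f"{respondent}: {variable} = {value} is not a legal value")
```

A check like this only catches values that cannot occur at all. A wrong but legal value (a 7 keyed in as an 8) still requires comparing the data against the original responses.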

Summary

A successful CEM program requires customer feedback data that are free from errors. Data processing errors can seriously impact the quality of your customer data. This post presented 10 rules to minimize and prevent data processing errors along with methods used to detect data errors. Following these simple rules and applying these data error detection methods will go a long way in preventing future headaches for anybody using your customer feedback data.

Does your company have formal data processing rules for your CEM program? If you have any additional insights about other data processing rules not included here, please share them!