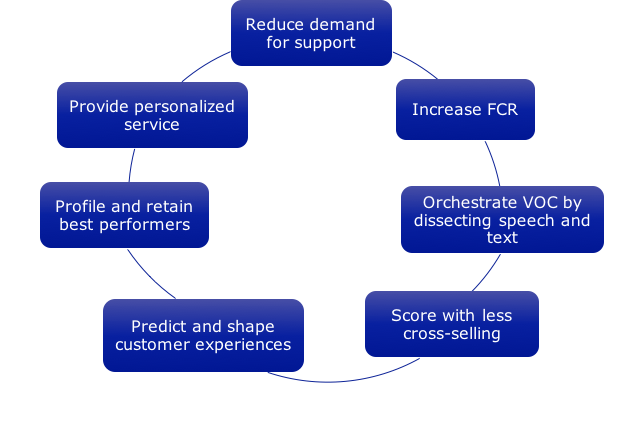

My April 2018 column “Using AI, Bots, Big Data, and Analytics to Reduce Demand for Support”1 covered one of the seven vexing challenges facing customer experience (CX) leaders. Today I’ll build on it and address the second challenge: increasing First Contact Resolution (FCR).

The power of AI, bots, Big Data, and analytics can now enable us to address the overall goal “How can we create and sustain a consistent and awesome customer experience across multiple channels & touch points?” and thereby increase sustainable revenues, realize higher margins, and sustain greater levels of customer satisfaction and loyalty.

Let’s dig into the second challenge, Increase FCR.

Study after study has confirmed that first contact resolution in the contact center and other support functions is the biggest driver of customer satisfaction2. However, most companies rely too heavily on point statistics like average FCR, and they neglect the fact that customers today start their inquiry or search for support online and, failing that, try the IVR system, only to find themselves speaking, emailing, or chatting with a customer service agent. Think about that: If the customer is unable to get what they want online and then in the IVR, then to them interacting with an agent is the 3rd contact, which is very dissatisfying, and costly!

Wouldn’t it be better, and more satisfying to customers, to understand FCR at the agent level (which agents resolve more contacts than others, and which ones do not, producing repeat contacts)? And to figure out which issues are harder to resolve the first time, producing repeat contacts? And to analyze FCR where the customer starts, at the web site, and resolve as many of those interactions as possible? This last point also relates to my earlier column on reducing demand into the contact center, and ties in neatly with my 1st book The Best Service is No Service3 and key parts of my 2nd book Your Customer Rules!4

In this post I won’t address how to define and improve IVR containment since there are plenty of other places to find out how5. Some of the reasons why it’s so hard to increase FCR, especially on the omni-channel basis I’ve proposed, include:

(1) The channels are usually “owned” by different groups or functions;

(2) Channel development is not coordinated;

(3) Channel reporting is similarly uncoordinated, housed in different databases or repositories;

(4) There is no single agreed upon definition of FCR; and

(5) We too often rely on “averages” for FCR, as for many other metrics like handle time (AHT), instead of drilling down to the agent level or to the issue level.

#5 is compounded by the lack of data that is granular enough to predict performance as agents join, are trained, or leave, and as issues or reasons become more or less complex.

However, it’s becoming easier to tackle these five reasons and track sustained increases in FCR by using AI, bots, Big Data, and analytics. I’ll review five steps that address this key question: “How can we anticipate repeat contacts; resolve them completely the first time; and focus on the issues, reason codes, and agents that are starting the repeat contact process (or, creating “Snowballs” that roll downhill), and on the processes and agents that are able to resolve repeat contacts (or, “melt the Snowballs”)?”

Step 1 = Define FCR in each channel and across channels.

As noted, there’s no consistent definition for FCR, and what is bandied around goes by a different name in each channel (“containment” for web support and IVR support, FCR average in the contact center). Let’s first use the same term for repeat, unresolved contacts (Snowballs); start where the customer starts, on the web site; and dispense with averages to get down to agent and issue levels. We can define FCR several ways, the most popular being “the same customer did not contact us again within 7 days for the same issue”; however, the “contact” typically cited is in the contact center, not across all the channels nor starting on the web. Therefore, let’s define FCR as “the same customer did not contact us again within 4 days for the same issue in any channel”, a tighter timeframe that matches customers’ greater levels of impatience.
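To make the cross-channel definition concrete, here is a minimal Python sketch. It assumes a contact log where every web visit, IVR call, and agent interaction is a record with a customer, an issue (reason code), a channel, and a timestamp; the field names and sample records are hypothetical. It also assumes the 4-day window is measured from the customer’s most recent contact, which is one reasonable reading of the definition, not the only one.

```python
from collections import defaultdict
from datetime import datetime, timedelta

WINDOW = timedelta(days=4)  # the 4-day window from the definition above


def build_episodes(contacts, window=WINDOW):
    """Group contacts into episodes: same customer, same issue, any channel,
    with each contact no more than `window` after the previous one."""
    by_key = defaultdict(list)
    for c in contacts:
        by_key[(c["customer"], c["issue"])].append(c)

    episodes = []
    for items in by_key.values():
        items.sort(key=lambda c: c["time"])
        current = [items[0]]
        for c in items[1:]:
            if c["time"] - current[-1]["time"] <= window:
                current.append(c)         # a repeat contact: the Snowball grows
            else:
                episodes.append(current)  # quiet for longer than the window
                current = [c]
        episodes.append(current)
    return episodes


def fcr_rate(episodes):
    """Share of episodes resolved in a single contact (None if there is no data)."""
    if not episodes:
        return None
    return sum(1 for e in episodes if len(e) == 1) / len(episodes)


# Hypothetical sample log: customer A tries the web, then the IVR, then an agent.
refund, spare = "where is my refund?", "how can I get another filter?"
contacts = [
    {"customer": "A", "issue": refund, "channel": "web", "time": datetime(2018, 8, 1, 9)},
    {"customer": "A", "issue": refund, "channel": "ivr", "time": datetime(2018, 8, 1, 10)},
    {"customer": "A", "issue": refund, "channel": "agent", "time": datetime(2018, 8, 2, 9)},
    {"customer": "B", "issue": spare, "channel": "web", "time": datetime(2018, 8, 1, 12)},
    {"customer": "A", "issue": refund, "channel": "web", "time": datetime(2018, 8, 20, 9)},
]

episodes = build_episodes(contacts)
rate = fcr_rate(episodes)
snowballs = [e for e in episodes if len(e) > 1]
```

In this sample, customer A’s web, IVR, and agent contacts form one Snowball, while the later refund question and customer B’s filter question each count as resolved on first contact, so two of three episodes pass. A contact-center-only count would have scored A’s agent call as a first contact.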

Step 2 = Collect FCR data points at the agent and issue levels.

This step leverages the power of Big Data to “mash up” a myriad of data sources, and sort out the ones with the highest levels of predictive value. Here is where we knock down “average” FCR and overall FCR across all issues; instead, we need to collect data points across all channels, agents, and issues, with issues = a limited set of “reason codes” in the customer’s language like “where is my refund?” or “how can I get another filter?” You will discover that the web site’s FCR is shockingly low (we often see average web support FCR around 30%!), meriting close attention to make web support dead simple. You will discover that some issues have a very low FCR, perhaps because of confusing policies (see Step 4), and some might be close to 100% FCR, meriting less attention to fix.

By constructing an input-output table that shows which agents resolve issues, and which ones do not, you can finally get past the averages and roll up agents into their teams to produce supervisor-based FCR results. Here you will see that some agents’ FCR is under 50%, pulling down the overall average FCR, perhaps because of inadequate training (see also Step 4), and some are almost perfect at 100%, meriting your attention to be sure to hold onto them.
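As a rough illustration of getting past the averages, the Python sketch below breaks one overall FCR number down by agent, by issue, and by team. It assumes each handled contact has already been flagged as `repeated` (the customer came back about the same issue within the window); the agent names, teams, and records are invented for the example.

```python
from collections import defaultdict


def fcr_by(records, key):
    """FCR per group: contacts that were not repeated / contacts handled."""
    totals = defaultdict(lambda: [0, 0])  # group -> [resolved, handled]
    for r in records:
        group = key(r)
        totals[group][1] += 1
        if not r["repeated"]:
            totals[group][0] += 1
    return {g: resolved / handled for g, (resolved, handled) in totals.items()}


def rec(agent, team, issue, repeated):
    return {"agent": agent, "team": team, "issue": issue, "repeated": repeated}


# Hypothetical handled contacts, one record per agent interaction.
records = [
    rec("ana", "north", "refund", False),
    rec("ana", "north", "refund", False),
    rec("ana", "north", "filter", False),
    rec("ben", "north", "refund", True),
    rec("ben", "north", "refund", True),
    rec("ben", "north", "filter", False),
    rec("cy", "south", "refund", False),
    rec("cy", "south", "filter", True),
]

overall = fcr_by(records, key=lambda r: "all")["all"]
by_agent = fcr_by(records, key=lambda r: r["agent"])
by_issue = fcr_by(records, key=lambda r: r["issue"])
by_team = fcr_by(records, key=lambda r: r["team"])  # supervisor-level roll-up
by_cell = fcr_by(records, key=lambda r: (r["agent"], r["issue"]))
```

With this sample the overall FCR is 62.5%, which hides the fact that one agent resolves everything the first time while another never resolves a refund question on first contact. The `by_cell` view is the agent-by-issue table, and the same function rolls agents up into their teams.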

Step 3 = Visualize FCR data at the agent and issue levels.

Now comes the fun part … loading the granular FCR data points into clear and actionable models. For several years now my team has been building these models in tools like Microsoft Power BI, Qlik, or Tableau, and there are other visualization tools available. It’s best to combine inputs, trend views, and “owner” displays, with owners = the executives charged with developing or improving processes and systems that impact FCR.

Step 4 = Test predictive models to increase FCR.

By testing some of the hypotheses discovered in Step 2, using AI you can find the cause and effect of probable drivers for FCR such as redesigned web support pages, new training, simplified knowledge sharing pages, and feedback to the agents (so that each one can see how their work affects customer satisfaction). You can also begin predicting which customers and issues are likely to be Snowballs, enabling workforce management to route them to more specialized agents with proven skill to melt Snowballs.
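The routing idea can be sketched with a deliberately simple stand-in for a predictive model: a smoothed historical repeat rate per issue, with a threshold that sends likely Snowballs to a specialist queue. This is a frequency baseline in Python, not an AI model, and the queue names, threshold, smoothing values, and history below are all assumptions made for illustration.

```python
from collections import Counter


def repeat_rates(history, prior_repeats=1, prior_total=2):
    """Estimate the chance that a contact about each issue becomes a Snowball.
    The small prior keeps issues with few contacts from reading as 0% or 100%."""
    handled, repeated = Counter(), Counter()
    for issue, was_repeated in history:
        handled[issue] += 1
        if was_repeated:
            repeated[issue] += 1
    return {
        issue: (repeated[issue] + prior_repeats) / (handled[issue] + prior_total)
        for issue in handled
    }


def route(issue, rates, threshold=0.5, default_rate=0.5):
    """Send likely Snowballs to specialists; unseen issues use default_rate."""
    rate = rates.get(issue, default_rate)
    return "specialist" if rate >= threshold else "general"


# Hypothetical history: (issue, did the customer contact us again?)
history = [
    ("refund", True), ("refund", True), ("refund", True), ("refund", False),
    ("filter", False), ("filter", False), ("filter", False), ("filter", False),
]

rates = repeat_rates(history)
refund_queue = route("refund", rates)
filter_queue = route("filter", rates)
```

In practice the per-issue rate would be replaced by a model that also uses customer, channel, and agent features, and its predictions would be checked against the Step 2 data before changing any routing.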

Step 5 = Celebrate successes (and push down the accelerator)!

In this final step you will be able to recognize agents who resolve more contacts than they send careening downhill as Snowballs, developers who increase web FCR, and visualization experts who bring all of this into clear focus. You can then learn from these successes, build upon them, and enter into the virtuous circle of continuous improvement.

By following these five steps and using AI, bots, Big Data, and analytics you will increase FCR and increase customer satisfaction. You will also:

- Avoid the plague of “averages” with more precise data points at the agent and issue levels;

- Reduce customer support costs;

- Engage the “owners” who are causing the issues to dig into the drivers and pursue the best action.

Notes:

1 http://customerthink.com/using-ai-bots-big-data-and-analytics-to-reduce-demand-for-support/ posted 20 April 2018.

2 “For every 1% improvement in FCR, there is a 1% improvement in Csat (top box response). Clearly, FCR is highly correlated to Csat. In fact, of all the contact center internal or external metrics, FCR is the metric with the highest correlation to Csat. The absence of FCR is the strongest driver of customer dissatisfaction. In fact, as previously mentioned, Csat (top box response) drops, on average, 15% every time a customer has to call back to get their initial call resolved. In other words, if a customer had to call in three times to get their call resolved their Csat (top box response) would be 30% lower than a customer who had their call resolved on the first call.” In https://www.sqmgroup.com/consulting/fcr-improvement-plan accessed 8 August 2018.

3 The Best Service is No Service: How to Liberate Your Customers From Customer Service, Keep Them Happy, and Control Costs, Bill Price & David Jaffe (Wiley 2008). Based partly on my years as Amazon’s 1st WW VP of Customer Service, but also on “Best Service” providers around the world who have made it easier for their customers to do business with them, we proposed 7 Drivers that start with “Challenge demand for service”:

- “Eliminate dumb contacts”

- “Create engaging self-service”

- “Be proactive”

- “Make it really easy to contact your company”

- “Own the actions across the company”

- “Listen and act”

- “Deliver great service experiences”

4 Your Customer Rules! Delivering the Me2B Experiences That Today’s Customers Demand (Wiley/Jossey-Bass 2015). Here are the 7 Customer Needs that Lead to a Winning “Me2B” Culture; each Need breaks down into a total of 39 Sub-Needs.

- “You know me, you remember me”

- “You give me choices”

- “You make it easy for me”

- “You value me”

- “You trust me”

- “You surprise me with stuff that I can’t imagine”

- “You help me better, you help me do more”

5 Here’s one of many resources out there: https://www.plumvoice.com/resources/blog/what-to-know-about-ivr-containment-rates/ accessed 8 August 2018

Valuable tips on increasing First contact resolution and providing consistent excellent customer service. Great post, thank you!

Thanks, Nathan. It’s hard to provide consistent excellent customer service, and FCR (not starting snowballs, and then if they happen, melting them) is a solid way to deliver.

Hi Bill: using your definition of FCR “the same customer did not contact us again within 4 days for the same issue in any channel”. I am not sure how prevalent the following situations are, but could a company receive a wrong signal if

a) the helpline solution wasn’t helpful, and the customer subsequently went online and received a more useful 3rd party solution – or hack – that fixed the problem (I’ve done this many times).

b) the customer got frustrated with the product and the answers the company provided about using it, and returned the product, replacing with one from a competitor.

In both instances, FCR would appear favorable, but the actual outcome for the vendor would not be.

Good points here, Andy, and both ought to be factored into the calculus. (a) requires “omni-channel” tracking that I have recommended since the company needs to know that the customer service agent/call center solution wasn’t sufficient. (b) is quite important, and here’s where Big Data can be powerful by collecting “down stream” events like product exchanges, reductions in the rates of purchase, cancelled contracts, and other marketing or sales data. I like to use a balanced scorecard for FCR with more weight for “what happened to the customer?” after the contact.
