(Part 2 of an Attensity/text analytics update. Click to read part 1, Attensity Doubles Down: Finances and Management.)

Attensity ex-CEO Kirsten Bay’s LinkedIn profile states her Attensity objective as “develop go-to-market strategy to reorient corporate focus from social media, text analytics to corporate intelligence.” A shift of this sort, a step up the value ladder from technology to solutions, seems sensible, yet Attensity has gone in a different direction. Since Bay’s December 2013 departure, the company has instead doubled down on a technology-centered pitch, placing its positioning bet on algorithms rather than on business benefit.

We read on Attensity’s Web site — the first sentence under About Attensity (as of August 14, 2014) — “Our text analytics technologies use patented, state-of-the-art semantic approaches to extract and recall information into valuable insights.” Other main-page tag-lines: “Using semantic analysis to extract textual insights” and “Enabling customer insights for social and non-social data.” There are variations on this sort of language throughout Attensity’s marketing collateral.

A tech-centered pitch is great if you’re marketing to developers, to a market segment that knows it needs “semantic approaches.” A tech pitch may also appeal to insights professionals, to market researchers and consultants. But for a business exec who’s looking to boost customer satisfaction, engagement, and loyalty, for competitive advantage? Perhaps not so compelling.

In an article that preceded this one, I characterized Attensity’s May 2014, $90 million financing announcement as a doubling down, in both investment and technical positioning. The earlier article covered Attensity’s financial and management picture. This second one focuses on positioning and prospects, with a few words on Attensity Q, a new “easy-to-use real-time intelligence” solution for marketers.

Go Your Own Way

In my earlier article, I offered the impression that Attensity’s business performance, measured in financial and competitive terms, has been stagnant in recent years. Attensity’s Aeris Capital owners evidently agreed: Ex-CEO Kirsten Bay, in describing her Attensity assignment in her LinkedIn profile (as of the moment I’m writing this article) uses “restructure” and “recapitalize” twice each. Recapitalize? Done, although it’s unclear where the $90 million is going, or went. Restructure? I don’t know what steps have been taken beyond the hiring of a new marketing head, Angela Ausman, and the reversion to tech-centric market messaging. The company has declined to discuss its product roadmap.

Attensity’s message is certainly different from that of its nearest competitors, who have had greater market and corporate success. Among business solutions providers:

Medallia has invested heavily in text analytics, maintaining, however, a pitch built around business benefit: “understand and improve the customer experience.”

Clarabridge repositioned several years ago as a customer experience management (CEM) solution provider; you won’t find “text analytics” on the main page of Clarabridge’s Web site.

Kana (owned by interaction-analytics leader Verint) sells “customer service software solutions.”

Confirmit bought text analytics provider Integrasco earlier this year, but eschews the “text analytics” label in favor of a functional description of the capability provided: “Discover insights in free-form content.”

Pegasystems focuses on improving customer-critical business processes, with text analysis capabilities enhanced via the May 2014 MeshLabs acquisition but still playing a supporting role.

Attensity could do likewise: Contextualize its text analytics technology by repositioning as a business solutions provider. Attensity certainly does understand the importance of context, because “context” (along with “insights”) is the part of the company’s pitch that best bridges tech and business benefit.

Context and Sense

In some of its more-recent material, Attensity has termed itself “the leading provider of corporate insight solutions based on proprietary data contextualization.” See, for example, the Attensity Q announcement.

I asked Attensity its definition of “data contextualization,” but again, the company declined to take up my questions. So I’ll give you mine: The notion that accurate data analysis accounts for provenance (identity, demographic and behavioral profile, and reliability of the source), channel (e.g., social media, surveys, online reviews, contact center), and circumstances (location, time, and activity prior to, during, and after a data point) among other factors. There’s a word that describes data context, metadata, so what’s different is a dedication to making better use of it in analyses.
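To make my definition concrete, here is a minimal Python sketch of a data point that carries its own context. It is my illustration only, not Attensity’s implementation, which the company has not described. Every name here (`Provenance`, `Circumstances`, `ContextualizedText`, `reliability_weighted_sentiment`) is hypothetical, as are the `sentiment` field and the idea of a 0-to-1 reliability score.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import List, Optional


@dataclass
class Provenance:
    """Who produced the data point (all fields are illustrative assumptions)."""
    source_id: str
    reliability: float                 # assumed score between 0.0 and 1.0
    demographic: Optional[str] = None


@dataclass
class Circumstances:
    """When and where the data point arose."""
    timestamp: datetime
    location: Optional[str] = None
    prior_activity: Optional[str] = None


@dataclass
class ContextualizedText:
    """A piece of text plus the metadata that puts it in context."""
    text: str
    sentiment: float                   # assumed score between -1.0 and 1.0
    channel: str                       # e.g. "social", "survey", "review"
    provenance: Provenance
    circumstances: Circumstances


def reliability_weighted_sentiment(items: List[ContextualizedText],
                                   channel: str) -> Optional[float]:
    """Average sentiment for one channel, weighting each item by source reliability."""
    selected = [i for i in items if i.channel == channel]
    total_weight = sum(i.provenance.reliability for i in selected)
    if total_weight == 0:
        return None                    # no usable items for this channel
    weighted = sum(i.sentiment * i.provenance.reliability for i in selected)
    return weighted / total_weight
```

The point of the sketch is that the analysis step consults the metadata rather than treating every mention as equal: a complaint from a low-reliability source on one channel counts for less than the same words from a well-profiled customer on another.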

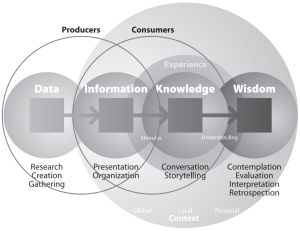

Authorities such as IBM’s Jeff Jonas have written (virtual) reams about context. See, for instance, Jonas’s G2 | Sensemaking – Two Years Old Today. Other vendors have made the case for context. One pitch: “Digital Reasoning uses machine learning techniques to accumulate context and fill in the understanding gaps.” I’ll present to you an illustration that dates back two decades, from Nathan Shedroff’s 1994 Information Interaction Design: A Unified Field Theory of Design.

Gary Klein, Brian Moon, and Robert R. Hoffman wrote in 2006 about sensemaking embodied in intelligent systems that “process meaning in contextually relative ways,” that is, relative to the data consumer’s situation. “Data contextualization,” as I understand it, makes explicit an extension of Shedroff-type models into the data producers’ realm, to better power those sought-after intelligent systems. The concept isn’t new (per IBM, Digital Reasoning, SAS’s Contextual Analysis categorization application, and other examples), even if the Attensity messaging/focus is.

How has Attensity implemented “data contextualization”? I don’t know details, but I do know that the foundation is natural language processing (NLP).

Attensity NLP-Based Q

“The strongest and most accurate NLP technology” is Attensity advantage #1, according to marketing head Angela Ausman, quoted in a June Agency Post article, Attensity Q Uses NLP and Visualization to Surface Social Intelligence From the Noise. Attensity advantage points #2 and #3 are development experience and “deep breadth of knowledge in social and engagement analytical solutions,” setting the stage for introduction of Attensity Q. Ausman cites unique capabilities that include real-time results; alerting; “quotable metrics for volatility, sentiment, mentions, followers, and trend score”; and term-completion suggestions, via a visualization dashboard.

Attensity Q comes across as designed for ease-of-use and sophistication (which are not necessarily mutually exclusive categories) beyond the established Attensity Analyze tool’s. Attensity Pipeline’s real-time social media data feed remains a differentiator, as do the NLP engine’s exhaustive extraction and voice-tagging capabilities and the underlying parallelized Data Grid technology.

But none of this, except for Q, is new, so is any of it, Q included, enough to support a successful Attensity relaunch? The question requires context, which I’ve aimed to supply. Its answer depends on execution, and that’s the responsibility of CEO Howard Lau and colleagues. I wish them success.

Disclosure: Attensity sponsored three instances of a conference I organize, the Sentiment Analysis Symposium, most recently in the fall of 2012, and the company was a 2011 sponsor of my text analytics market study. A new version of that study is out, Text Analytics 2014, available for free download, sponsored by Digital Reasoning among others. And IBM’s jStart innovation team sponsored my 2014 sentiment symposium.